This guide walks marketing and operations leaders through the specific features that drive retention and revenue and shows the KPIs, demo scorecard questions, and tradeoffs you should use to build a measurable shortlist. It explains how to evaluate the best customer engagement software for integration, omnichannel orchestration, AI personalization, experimentation, and compliance so you can pick a platform that scales without blowing up headcount. Choosing the right customer engagement platform for scale starts with a focused checklist of capabilities, not vendor hype.

1 Unified Customer Profile and First-Party CDP

Bottom line: a reliable marketing program starts with one trustworthy customer record. Without deterministic identity stitching and low-latency event ingestion, even the best customer engagement software will send the wrong message to the wrong person at the worst time — and you will lose trust faster than you gain conversions.

What to expect from a first-party CDP and unified profile: persistent profile attributes, event history, resolved identifiers (email, phone, membership id), and a queryable store that updates in near real time. This is not a reporting cache — it must power decisioning for orchestration, personalization, and analytics across POS, booking systems, mobile, and web.

KPIs to validate during procurement

- Identity match rate: percentage of events that map to an existing profile across sources (goal: maximize for active cohorts).

- Profile update latency: time from an event (booking, payment, app activity) to availability on the profile store (real-world target: seconds to a few minutes).

- Duplicate profile reduction: measured before and after onboarding—tracks cleanup effectiveness.

- Profile completeness score: proportion of profiles with key attributes (phone, membership id, consent flags).

- False merge rate: frequency of incorrect merges — small numbers matter more than high match rates.
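The KPIs above are simple to compute yourself from a POC event log, which is a useful cross-check on vendor-reported numbers. The following is a minimal sketch, assuming you can export one record per ingested event with two timestamps and a matched profile id; the `IngestRecord` fields and function names are hypothetical, not any vendor's schema.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class IngestRecord:
    event_id: str
    matched_profile_id: Optional[str]  # None when no existing profile matched
    occurred_at: datetime              # when the booking or payment happened
    available_at: datetime             # when it became readable on the profile


def identity_match_rate(records: List[IngestRecord]) -> float:
    """Share of events that mapped to an existing profile."""
    if not records:
        return 0.0
    matched = sum(1 for r in records if r.matched_profile_id is not None)
    return matched / len(records)


def update_latencies(records: List[IngestRecord]) -> List[float]:
    """Profile update latency in seconds for each event."""
    return [(r.available_at - r.occurred_at).total_seconds() for r in records]


def percentile(values: List[float], pct: int) -> float:
    """Nearest-rank percentile; pct is an integer from 1 to 100."""
    if not values:
        raise ValueError("no values")
    ordered = sorted(values)
    rank = max(1, -(-pct * len(ordered) // 100))  # ceil(pct * n / 100)
    return ordered[rank - 1]


def false_merge_rate(audited_merges: List[bool]) -> float:
    """audited_merges holds True for each sampled merge judged incorrect."""
    if not audited_merges:
        return 0.0
    return sum(audited_merges) / len(audited_merges)
```

Reporting `percentile(update_latencies(records), 95)` alongside the average shows tail behavior, and the false merge rate is only as good as the manual audit sample behind it.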

Demo scorecard questions to use live: ask vendors how they ingest events from your booking system and POS, what their typical identity resolution match rate is and how they report false merges, and whether they support both deterministic and probabilistic matching with tunable thresholds. Also test the API by pushing a booking event during the demo and watching the profile materialize.

Trade-off to evaluate: aggressive probabilistic matching raises match rates but increases risk of incorrect merges that break loyalty programs and billing workflows. In practice, mid-market B2C firms are better off prioritizing deterministically linked identifiers (membership id, phone, email) and using probabilistic joins only for enrichment or cold-start modeling.
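To make "deterministically linked identifiers" concrete, here is a minimal sketch of exact-match identity resolution. It assumes a fixed priority of membership id, then phone, then email, with light normalization; the `ProfileIndex` class and its behavior are illustrative assumptions, not how any particular platform resolves identity.

```python
from typing import Dict, Tuple

# Assumed priority order: the most trustworthy identifier is checked first.
PRIORITY = ("membership_id", "phone", "email")


def normalize(kind: str, value: str) -> str:
    """Normalize identifiers so trivial formatting differences still match."""
    value = value.strip()
    if kind == "email":
        return value.lower()
    if kind == "phone":
        return "".join(ch for ch in value if ch.isdigit())
    return value


class ProfileIndex:
    """Exact-match index from (identifier kind, value) to a profile id."""

    def __init__(self) -> None:
        self._by_identifier: Dict[Tuple[str, str], str] = {}
        self._next_id = 1

    def resolve(self, identifiers: Dict[str, str]) -> str:
        keys = [
            (kind, normalize(kind, identifiers[kind]))
            for kind in PRIORITY
            if identifiers.get(kind)
        ]
        # Use the first known identifier in priority order, if any.
        profile_id = next(
            (self._by_identifier[k] for k in keys if k in self._by_identifier),
            None,
        )
        if profile_id is None:
            profile_id = f"p{self._next_id}"
            self._next_id += 1
        # Link only identifiers that are not already claimed by a profile.
        for key in keys:
            self._by_identifier.setdefault(key, profile_id)
        return profile_id
```

This sketch never merges two existing profiles: when identifiers point at different profiles, the highest-priority match wins and the conflict is left alone. A real system would flag that case for review, which is where false merges tend to originate.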

Concrete example: A multi-location fitness chain normalized membership id from the POS, booking records from Mindbody, and mobile app events. After mapping canonical ids and enabling sub-minute ingestion, they cut duplicate profiles by 45% and started triggering missed-class reengagement within 30 minutes of a no-show — a clear, attributable lift in class recovery revenue.

Vendor signals that matter: look for platforms that pair event collectors like Segment or RudderStack with a profile store (mParticle, Treasure Data) or an integrated option that exposes profile APIs. Check for prebuilt connectors for your systems, and see Gleantap features for examples tailored to membership businesses.

Run a short POC that pushes 1,000 real events from your booking and POS systems, then request a dedupe report and profile latency metric. Claims on spreadsheets rarely match live ingestion behavior.

Practical next step: map your canonical identifiers and pick three high-velocity events (first booking, payment failure, class no-show) to validate end-to-end latency and correct profile resolution.

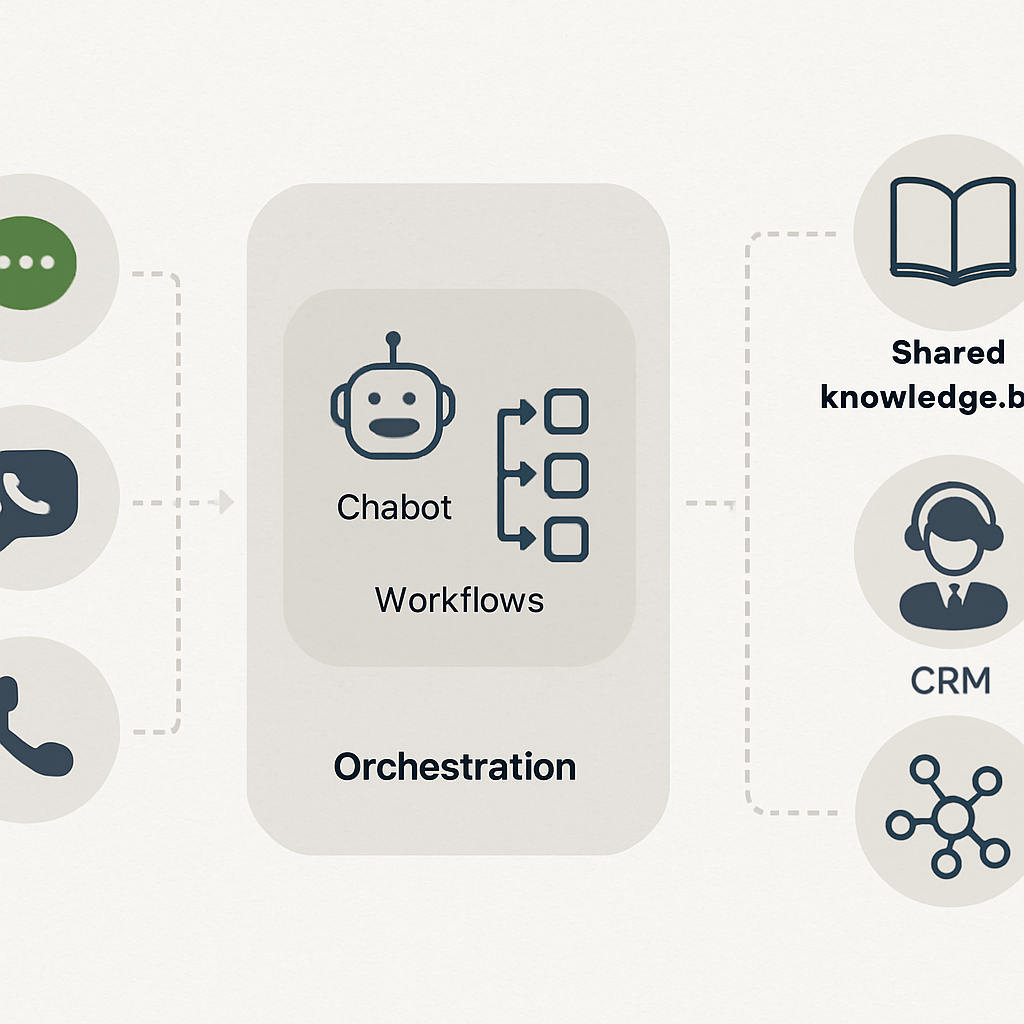

2 Omnichannel Orchestration and Native Channel Support

Bottom-line observation: Omnichannel success is not about checking every channel box — it is about predictable routing, coordinated throttling, and deterministic fallback so a high-value message arrives once, on the channel that produces the best outcome for that customer.

Native vs integrated channels: Native channels (built-in SMS, email, push, web messaging) give you tighter control over latency, delivery retries, suppression lists, and carrier relationships. Platforms that rely entirely on external providers via connectors can work, but expect higher operational overhead: extra API hops, separate dashboards, and inconsistent suppression behavior across systems.

How to evaluate the best customer engagement software for omnichannel

- Measure delivery SLAs: ask for p95 latency for transactional and campaign sends, not just average latency.

- Fallback success rate: what percent of messages fall back to an alternate channel within your configured window?

- Suppression consistency: does a single unsubscribe or DNC flag prevent sends across all channels instantly?

- Concurrency and throttling: messages per second limits and rate-limit handling during peak events such as flash sales or payment failures.

Practical trade-off: Choosing a platform with many native channels increases reliability and reduces integration work, but it often raises cost and vendor lock-in. If your team lacks engineering bandwidth, prefer a vendor that offers the primary channels you need natively and clear export APIs so you can escape later if necessary.

Concrete example: A multi-location studio chain used a marketing platform for email and push while sending SMS through a separate provider. During peak renewal season they accidentally sent duplicate reminders because the two systems did not share suppression state. They resolved it by moving SMS into the orchestration layer and implementing a single suppression API; recovery cut complaint rates and reclaimed staff time previously spent reconciling lists.

Operational considerations: Carrier rules and regional regulations change often — your vendor should surface carrier error codes, support automatic retries or alternate routings, and expose reporting for deliverability troubleshooting. Also confirm how the platform handles transactional versus promotional classification, since misclassification harms deliverability and compliance.

POC checklist: during a demo, trigger a high-priority transactional event and watch end-to-end behavior — delivery latency, fallback activation, suppression enforcement, and any UI or API gaps. Ask the vendor to run the same test for an international phone number if you have cross-border customers.

Demo task: Simulate a missed-payment event and verify the platform will: 1) pause promotional sends to that profile, 2) attempt SMS then fallback to email after your configured delay, and 3) log the decision path in the activity feed.
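The behavior you are checking in that demo task can be written down as a small decision function, which helps when comparing what the vendor's activity feed shows against what you expected. This is a sketch under stated assumptions: `Profile`, `route_payment_failure`, and the `deliver` callback (which stands in for "was the message delivered within the configured delay") are all hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Set, Tuple


@dataclass
class Profile:
    profile_id: str
    suppressed_channels: Set[str] = field(default_factory=set)
    promos_paused: bool = False


def route_payment_failure(
    profile: Profile,
    deliver: Callable[[str], bool],
    fallback_order: Tuple[str, ...] = ("sms", "email"),
) -> Tuple[Optional[str], List[str]]:
    """Return the channel that delivered (or None) plus the decision log."""
    log: List[str] = []

    # 1) Pause promotional sends while the payment issue is open.
    profile.promos_paused = True
    log.append("promotional sends paused")

    # 2) Try each channel in order, honoring suppression flags.
    for channel in fallback_order:
        if channel in profile.suppressed_channels:
            log.append(f"{channel}: skipped (suppressed)")
            continue
        if deliver(channel):
            log.append(f"{channel}: delivered")
            return channel, log
        log.append(f"{channel}: not delivered within window, trying next channel")

    # 3) Every step above is logged so the path can be audited later.
    log.append("no channel delivered")
    return None, log
```

In a live demo, the vendor's activity feed should let you reconstruct an equivalent log for the test profile: the pause, each channel attempt, and the reason for any fallback.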

Vendor signals to watch: look for platforms that document channel SLAs, publish carrier-level error handling, and provide unified suppression APIs. For orchestration patterns and programmable messaging, see the Twilio blog for messaging primitives and orchestration examples, and vendor implementations that bundle channels natively, such as those described on the Gleantap features page.

Next consideration: If you must integrate external providers, insist on contract-level SLAs for delivery visibility and a tested export path for suppression and message history. That prevents the most common failure mode: silent duplicates and fractured customer experiences that erode trust faster than any single campaign can earn it.

3 AI-driven Personalization and Recommendations

Clear point: AI personalization returns the most value when it reduces decision friction for marketers — not when it replaces their judgment. Practical systems deliver targeted product, content, or action recommendations that are observable, measurable, and auditable across channels.

Systems to expect include real-time scoring for propensity (likelihood to convert, churn, or attend), item-to-user recommenders (next class, product, or content), and content selection layers that choose subject lines or images per user. The technical requirement is fast, reliable inference tied to first-party signals so a recommendation can be used instantly by email, SMS, web, or in-app workflows.

Vendor validation — what actually matters in a demo

Ask for demonstration artifacts, not promises. Request a live scoring of a sample of your profiles during the demo, and check latency, coverage, and why certain items were suggested. Verify the vendor exposes the input features used for each score and how you can access those features for reporting or downstream ML.

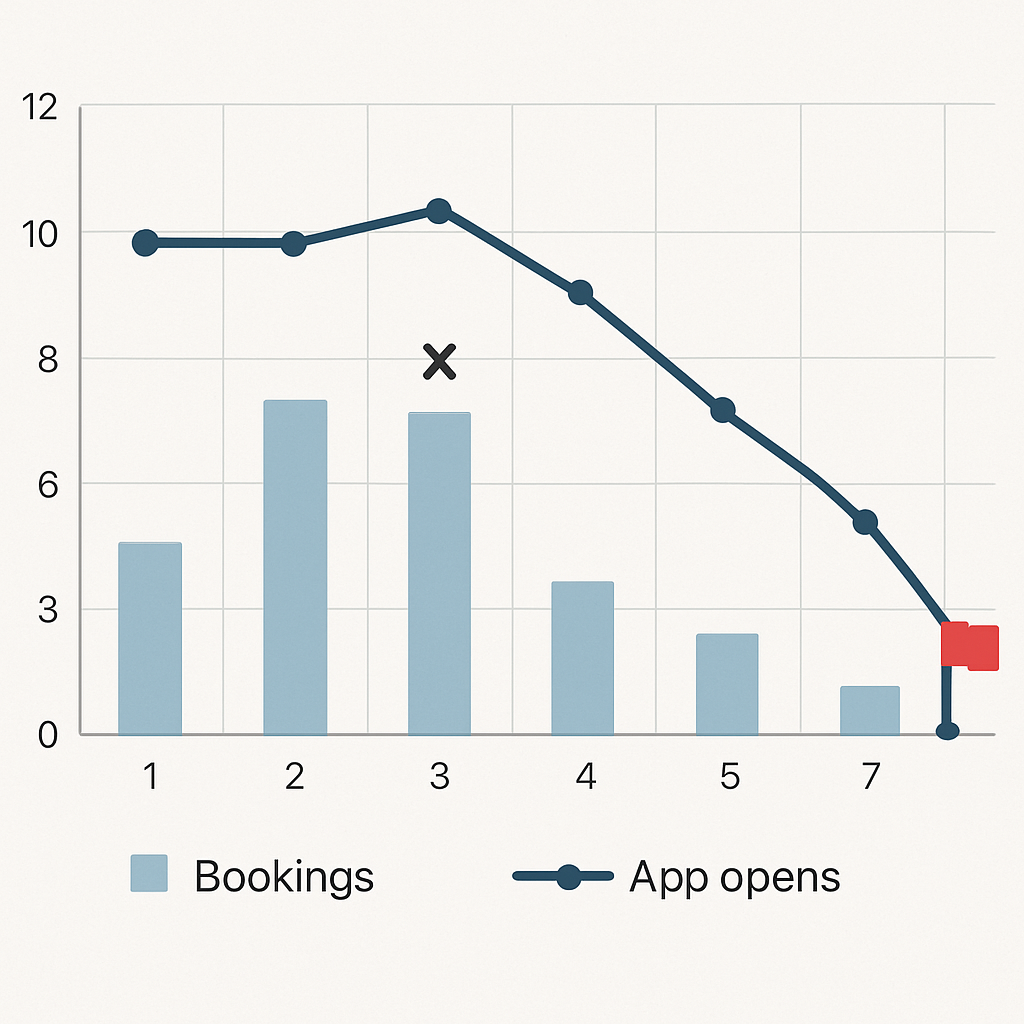

- Step 1: Map the signals you already collect (bookings, no-shows, payments, app opens) and identify two high-leverage outputs to model (eg, churn risk and next-class recommendation).

- Step 2: Run a short pilot that uses model outputs only to prioritize messages for a small segment, not to automate billing or critical flows.

- Step 3: Instrument incrementality tests (holdout groups) so you measure true lift versus correlation.

- Step 4: Require explainability: each recommended action must show the top three factors that produced it so business teams can trust and tune behavior.

- Step 5: Define an operational SLA for inference — p95 latency and throughput limits — and test it under expected peak concurrency.
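For Step 3, the holdout group has to be stable: the same customer must land in the same arm every time the pilot runs. One common way to get that without storing assignments is to hash the profile id together with the experiment name. The sketch below assumes a 10% holdout by default; `in_holdout` and its parameters are illustrative names.

```python
import hashlib


def in_holdout(profile_id: str, experiment: str, holdout_pct: int = 10) -> bool:
    """Deterministically assign a profile to the holdout for one experiment.

    Hashing the experiment name with the profile id keeps assignments
    stable across runs and independent between experiments.
    """
    digest = hashlib.sha256(f"{experiment}:{profile_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 100  # bucket in the range 0..99
    return bucket < holdout_pct


if __name__ == "__main__":
    ids = [f"user-{i}" for i in range(10_000)]
    held_out = sum(in_holdout(pid, "churn-pilot") for pid in ids)
    print(f"holdout share: {held_out / len(ids):.3f}")
```

Profiles in the holdout receive no model-driven messages, and the comparison between the two groups is what separates true lift from correlation.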

Trade-off to accept: out-of-the-box recommenders buy speed but not longevity. Template recommenders will get you early wins, but mature programs require either a vendor feature store or the ability to import your own model scores via API. If your team lacks data science bandwidth, prefer platforms that provide clear export hooks so you can graduate to custom models later without a data migration.

Real-world application: A regional wellness studio used an AI score to pick three classes to surface in its weekly push notification. For users flagged as high churn risk, the system prioritized low-capacity classes and an incentive offer; for active users it suggested a premium workshop. The studio phased the feature by running a 30-day holdout to confirm incremental rebookings before scaling the feed across all locations.

Important: model coverage beats marginal precision early. A modestly accurate model that scores 80% of your active profiles will usually produce more impact than a highly precise model that only covers 10%.

Operational metrics to require during procurement: model coverage (percent of active profiles scored), end-to-end inference SLA (p95 latency), feature transparency (top contributing features per prediction), and drift detection cadence (how often the vendor surfaces degraded performance). Also confirm export APIs so you can archive scores and run offline audits.

Pitfalls teams miss: vendors often conflate personalization with dynamic content insertion. Personalization should change the proposition, not just the name token in an email. Also test cold-start handling for new customers and low-activity users — a fallback rule set must be explicit and measurable, otherwise high-value profiles will be ignored.

If you want working examples and implementation guides, review vendor case notes on model explainability and scoring in Braze resources, and explore engineering-focused writeups on messaging primitives at the Twilio blog. For a hands-on feature map tailored to membership businesses, see Gleantap features.

Next consideration: before you let a model control promotion allocation, define guardrails for spend and customer experience — set frequency caps per customer, require a human-review path for high-cost incentives, and monitor incremental ROI continuously. That containment is the difference between an experiment that scales and an automated program that blows budget and trust.
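A per-customer frequency cap is the simplest of these guardrails to reason about. The following sketch assumes a sliding time window and an in-memory store; the `FrequencyCap` class is a hypothetical illustration, and a production system would persist this state.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta
from typing import Deque, Dict


class FrequencyCap:
    """Allow at most max_sends per profile within a sliding window."""

    def __init__(self, max_sends: int, window: timedelta) -> None:
        self.max_sends = max_sends
        self.window = window
        self._sent: Dict[str, Deque[datetime]] = defaultdict(deque)

    def allow(self, profile_id: str, now: datetime) -> bool:
        history = self._sent[profile_id]
        # Drop sends that have aged out of the window.
        while history and now - history[0] >= self.window:
            history.popleft()
        if len(history) >= self.max_sends:
            return False  # cap reached, suppress this send
        history.append(now)
        return True
```

A model can propose as many offers as it likes; the cap is checked last, so the customer experience limit holds regardless of what the scoring layer does.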

4 Journey Orchestration and Automation Builder

Hard truth: a visual journey builder is only useful if it enforces safe, stateful logic for long-running programs. Many vendors sell pretty canvases that collapse when you need month-long branches, backfill, or audit trails; that failure mode creates more manual firefighting than automation saves.

A production-grade automation builder must do four things reliably: maintain per-user state across pauses and re-entries, allow backfill of historical cohorts without duplicating sends, expose the decision path for every message, and let non-technical staff edit low-risk flows while keeping high-risk paths locked. If your team lacks an engineer for daily fixes, favor platforms that separate editable marketing steps from guarded system steps.

How the best customer engagement software should handle journeys

Expect event-triggered flows that can run for 12 months or more, with conditional branching based on real-time profile attributes and external signals (payment status, class attendance, membership tier). Practical constraint: long-running journeys need snapshotting and idempotency so edits do not re-run completed steps unintentionally. Ask for a demo of the edit-and-backfill controls during procurement.

- KPIs to validate: average time to deploy a new journey (hours, not days), percent of journeys using automated backfill correctly, send duplication rate after edits (target: near 0%), and retention delta attributable to automated journeys over a 90-day window.

- Operational probes to run in a demo: create a 6-month winback flow, enroll a test cohort, change a mid-flow message, and observe whether the edit triggers duplicate sends or logs a safe-edit event.

- Governance checks: ability to lock steps (billing, cancellations), role-based editing, and an activity feed that shows why a profile exited or branched within a journey.

Trade-off to accept: builders that offer deep control (conditional scripting, custom code actions) require better testing discipline and more engineering oversight. If your goal is speed and low headcount, pick a platform with robust templates and operational guardrails; if you need full control over edge cases, accept the overhead of a sandbox and release process.

Real-world use case: A regional fitness operator implemented a staged onboarding flow that begins at first booking, waits 3 days for attendance, then branches: attendees get upsell messages; no-shows enter a reengagement sequence with a single incentive. The team used safe-edit mode to tweak messaging after two weeks and relied on the platform’s backfill to apply the update only to profiles still mid-journey — preventing duplicate incentives and preserving margins.

Most teams misunderstand backfill: it is not a free way to retroactively send the same campaign to everyone. Backfill must be scoped by state, time window, and suppression rules. If a vendor treats backfill as a bulk-send button, that is a red flag.

- Implementation tip: catalog your core lifecycle flows first (onboarding, engagement, payment failure, churn prevention) and instrument the exact event and profile attributes each flow requires.

- Testing tip: run journeys in a staging workspace with the same data cadence and use holdouts so you can measure incrementality before scaling.

- Integration tip: ensure the journey engine consumes events with sub-minute latency for time-sensitive paths like payment retries and missed-appointment nudges.

Design journeys for reparability: require idempotent actions, visible decision logs, and a rollback path so a failed automation can be fixed without re-traumatizing customers.
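Idempotent actions are easier to demand from a vendor when you can describe the mechanism. A minimal sketch, assuming each send is keyed by journey, step, and profile: `SendLedger` is a hypothetical name, and the in-memory set stands in for durable storage.

```python
from typing import Callable, Set, Tuple


class SendLedger:
    """Records which (journey, step, profile) sends have already happened."""

    def __init__(self) -> None:
        self._sent: Set[Tuple[str, str, str]] = set()

    def send_once(
        self,
        journey_id: str,
        step_id: str,
        profile_id: str,
        send: Callable[[str], None],
    ) -> bool:
        """Run send(profile_id) unless this exact step already fired.

        Returns True if the message was sent, False if it was skipped.
        """
        key = (journey_id, step_id, profile_id)
        if key in self._sent:
            return False
        send(profile_id)
        # Recorded only after a successful send, so a failed send can be retried.
        self._sent.add(key)
        return True
```

With a ledger like this, editing a mid-flow message or replaying a backfill cannot re-send a step a profile has already completed, which is the behavior to look for when you test edit-and-backfill controls.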

During demos, demand a live scenario: push a payment-failure event, confirm the journey pauses while billing is retried, then simulate a successful retry to see the flow continue. If the vendor cannot run this end-to-end in the demo, budget time for a POC.

If you want concrete templates for membership lifecycles, review vendor examples from Braze Canvas and Iterable workflows, test Salesforce Marketing Cloud Journey Builder for enterprise-grade governance, and compare how Gleantap features implement guarded templates for fitness and wellness programs. The final decision is about matching operational maturity: pick the level of control your team can maintain consistently.

5 Real-time Analytics, Attribution and Experimentation

Straight to the point: fast event streams are only useful when you can turn them into directional decisions and measurable dollars. Real-time ingestion without an experiment and attribution discipline turns dashboards into noise and wastes marketing budget.

Why this matters now: modern campaigns act on seconds — a missed payment alert or a last-minute class reminder only works if the data and decisioning are live. At the same time, channel proliferation makes naive last-touch metrics misleading. You need both low-latency signals and a framework that proves which actions actually move retention or revenue.

How the best customer engagement software supports experiments

Platforms that earn the label best customer engagement software combine three capabilities: sub-minute event availability, built-in split testing and holdouts, and cross-channel attribution that links exposures to outcomes. Do not accept a vendor that only exports logs for offline analysis; you need the experiment engine and attribution logic close to the orchestration layer so you can run rapid iterations and trust the results.

| KPI | What to measure and why |
| --- | --- |
| Experiment detection time | Time from deployment to a statistically actionable signal. Shorter windows enable faster pivots, but watch for false positives when volumes are low. |
| Incremental lift | True improvement vs holdout, not relative CTR. Use holdouts to measure whether a campaign created net conversions or simply shifted timing. |
| Cross-channel contribution | Proportion of conversions attributable to each channel after controlling for exposure sequencing. Prefer algorithmic or mixed models over naive last-touch. |
| Attribution latency and completeness | How long after an event the platform will reconcile exposures to conversions and what percent of conversions it can link across devices and sessions. |

Practical trade-off: real-time attribution and experimentation increase compute and storage costs and require stricter event hygiene. If you try to detect small lifts on low-volume segments in real time, you will either run underpowered tests or chase noise. Prioritize near-real-time signals for high-frequency actions and batch robust experiments for low-velocity outcomes.
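The risk of chasing noise is easy to see with numbers. The sketch below computes incremental lift against a holdout and a two-proportion z-score using a pooled standard error; the function name and the sample counts in the usage note are made up for illustration.

```python
from math import sqrt
from typing import Dict, Optional


def incremental_lift(
    treated_n: int, treated_conv: int, holdout_n: int, holdout_conv: int
) -> Dict[str, Optional[float]]:
    """Compare conversion rates between a treated group and a holdout."""
    if treated_n <= 0 or holdout_n <= 0:
        raise ValueError("both groups need at least one profile")

    p_treated = treated_conv / treated_n
    p_holdout = holdout_conv / holdout_n
    diff = p_treated - p_holdout

    # Pooled standard error for a two-proportion z-test.
    pooled = (treated_conv + holdout_conv) / (treated_n + holdout_n)
    se = sqrt(pooled * (1 - pooled) * (1 / treated_n + 1 / holdout_n))

    return {
        "absolute_lift": diff,
        "relative_lift": diff / p_holdout if p_holdout > 0 else None,
        "z_score": diff / se if se > 0 else None,
    }
```

With 1,000 profiles per arm and 120 versus 100 conversions, the relative lift is about 20% but the z-score is only about 1.43, below the 1.96 threshold for a two-sided 5% test. A lift that looks large on a dashboard can still be indistinguishable from noise at that volume.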

Concrete example: a retail chain rerouted flash-sale spend mid-day after a real-time experiment showed email converted better for loyalty members while paid social worked for new prospects. They used a 24-hour holdout to verify incrementality, shifted budget automatically, and captured the outcome to their product analytics tool for post-mortem. That operational loop required both the experiment primitives in the engagement platform and integration with Amplitude for deeper funnel analysis.

Common mistake to avoid: vendors often present multi-touch models as fact. In practice, algorithmic attribution is sensitive to missing identifiers and cross-device gaps. Treat model outputs as directional and validate them with randomized holdouts before using them to reallocate significant budget.

Require a demo where the vendor runs a live A/B with a holdout and shows the end-to-end timeline: event ingestion, decisioning, delivery, and attribution reconciliation. If they cannot produce that in a POC, assume the platform will add weeks to your learning cycle.

Next consideration: instrument canonical conversion events up front, keep experiments simple and well-powered, and demand exportable raw results so your finance or analytics teams can audit claims. For implementation templates and integration notes see Gleantap features.

6 Behavioral Segmentation and Lifecycle Management

Hard fact: you will not increase retention reliably by spraying offers at demographic buckets. Behavioral segments that update from live events are the mechanism that turns first-party signals into timely interventions that can actually change customer behavior.

Operational value: treat segmentation as both a measurement lens and an activation primitive. Segments must be queryable, actionable across channels, and anchored to persistent lifecycle stages (for example new, active, at-risk, lapsed) so your campaigns can apply different business rules and experiments against each stage.

How to vet behavioral segmentation in the best customer engagement software

Key metrics to request during procurement: ask vendors to show live numbers for segment evaluation latency (time from event to segment membership change), segment coverage (percent of your active base eligible for dynamic segments), and signal-to-action lift (measured improvement in the target KPI after a segment-targeted flow). Also demand exportable cohort retention curves so you can compare lifecycle stage performance over time.

Practical trade-off: dozens of highly granular micro-segments look sophisticated but create testing and operational problems. Small segments reduce statistical power, increase churn in audience composition, and multiply activation rules across channels. Start with a short list of high-impact behavioral definitions and treat further granularity as a later optimization once you can measure incremental lift.

- Demo checks for every vendor: Can segments be defined on live event windows (for example, no app opens in 7 days AND last booking > 30 days)?

- Activation scope: Are dynamic segments immediately available to all channels (SMS, email, push, web) or do some channels require exports?

- Edit safety: If you change a segment definition, does the system support backfill controls and show which profiles will be added or removed before actions fire?
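The example rule in the first check (no app opens in 7 days AND last booking more than 30 days ago) and the edit-safety preview in the last one can both be expressed in a few lines. This sketch assumes a profile with no recorded activity counts as inactive; the function names are hypothetical.

```python
from datetime import datetime, timedelta
from typing import Optional, Set, Tuple


def is_at_risk(
    now: datetime,
    last_app_open: Optional[datetime],
    last_booking: Optional[datetime],
) -> bool:
    """True when there are no app opens in 7 days AND last booking > 30 days ago.

    Assumption: a missing timestamp means the activity never happened,
    so the profile qualifies on that condition.
    """
    no_recent_open = last_app_open is None or now - last_app_open > timedelta(days=7)
    stale_booking = last_booking is None or now - last_booking > timedelta(days=30)
    return no_recent_open and stale_booking


def segment_diff(before: Set[str], after: Set[str]) -> Tuple[Set[str], Set[str]]:
    """Preview which profiles a definition change would add or remove."""
    return after - before, before - after
```

Running `segment_diff` on membership before and after a definition change gives the added and removed profile ids, which is the preview a vendor should show you before any actions fire.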

Concrete example: a regional fitness operator created an at-risk-7 segment that combined 7-day inactivity, a recent missed class, and a decline in app engagement. When a member entered that segment the platform immediately ran a prioritized sequence: an in-app nudge, an SMS reminder, then a coach outreach if no response. The team validated impact with a 30-day holdout and observed an increase in rebookings among the treated group.

Integration reality: some platforms compute segments at query time (fast for ad-hoc analysis) while others evaluate membership continuously (fastest for triggers). Continuous evaluation is superior for time-sensitive flows but costs more in compute and may require event-hygiene discipline. If your use cases include missed-payment or last-minute class rescue, insist on sub-minute evaluation.

Implementation tips that matter: standardize event names (booking.created, payment.failed, class.attended), create a concise catalog of 6-8 lifecycle segments to start, and maintain a mapping document that ties each segment to the downstream journey and KPI to avoid orphaned audiences. Make sure segments are surfaced in the UI and via API so operations and analytics teams can both use them without re-creation.

Do not confuse behavior-derived segments with static lists. Dynamic segments must be auditable, triggerable, and testable. Require the vendor to run a live segment change during the demo and show which messages are scheduled as a result.

Vendor signals to prefer: live activation across channels, explicit lifecycle stage support, backfill controls, and clear costs for continuous segment evaluation. For data-layer and segment feeding, see Segment, and for practical orchestration examples check Braze resources. For vertical-specific lifecycle templates, see Gleantap features.

7 Integrations, APIs, and Data Portability

Key point: Integration capability is a gating factor — the platform either becomes the connective tissue for your business or it creates a second silo. Evaluate APIs and connectors as operational features, not optional extras, because integrations determine how quickly you can automate lifecycle moments and recover when things break.

What to insist on beyond connector counts

Practical requirement: The best customer engagement software for a B2C operator provides streaming ingestion (webhooks or CDC), SDKs for mobile/web, bidirectional APIs for profile and event updates, and reliable bulk export for archives and audits. Prebuilt connectors save time, but the platform must also let you run a full data sync and expose raw event logs so analytics and finance teams can validate outcomes.

- Operational KPIs to measure: average time to onboard a new data source, webhook delivery success rate, API error and retry rates, and completeness of exported records (fields present / expected).

- Interoperability checks: support for JSON schemas, CDC, SFTP/CSV exports, and the ability to accept third-party model scores via API.

- Governance points: versioned schema support, field-level consent/suppression flags, and documented backup/restore procedures.
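The export completeness KPI above (fields present / expected) is worth scripting so you can run it against every export the vendor hands over. A minimal sketch, assuming exported records arrive as dictionaries and that `None` or an empty string counts as missing; the function names and sample fields are illustrative.

```python
from typing import Any, Dict, Iterable, List


def export_completeness(
    records: List[Dict[str, Any]], expected_fields: Iterable[str]
) -> float:
    """Fraction of expected field values that are present across all records."""
    fields = list(expected_fields)
    total = len(records) * len(fields)
    if total == 0:
        return 0.0
    present = sum(
        1
        for record in records
        for name in fields
        if record.get(name) not in (None, "")  # False and 0 count as present
    )
    return present / total


def missing_by_field(
    records: List[Dict[str, Any]], expected_fields: Iterable[str]
) -> Dict[str, int]:
    """Count how many records are missing each expected field."""
    return {
        name: sum(1 for record in records if record.get(name) in (None, ""))
        for name in expected_fields
    }
```

The per-field breakdown usually matters more than the headline ratio: an export that is 95% complete overall but missing consent flags on a fifth of profiles is not usable for an audit.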

Trade-off to accept: Prebuilt, opinionated integrations speed launch but can lock you into a data model. If your business relies on non-standard identifiers (membership id, location codes), prefer platforms that publish schema contracts and let you transform data during ingestion. That reduces future migration friction.

Vendor demo checklist (what to run live)

- Full sync test: Ask the vendor to perform a one-time sync of membership, booking, and payment history and provide a completeness report you can audit.

- Webhook reliability run: Push 200 test events to a temporary endpoint and watch delivery, retries, and failure handling.

- Export and restore: Request a bulk export of a representative cohort, then import it into a staging workspace to confirm field mappings and restore behavior.

- APIs under load: Verify documented rate limits, and request a p95 response-time metric for profile read/write under expected concurrency.

Integration reality check: Lightweight automation tools like Zapier are useful for ad hoc flows, but they are fragile for high-volume lifecycle automation. Use Zapier for proofs-of-concept, not for core billing or churn-prevention paths where missed events cost revenue.

Practical use case: A regional wellness operator validated a vendor by wiring live events from Mindbody, Stripe, and their mobile app during a POC. The initial sync revealed mismatched membership identifiers; the vendor provided a mapping layer and webhook replay capability so missed triggers were backfilled without duplicating communications — saving a week of manual cleanup and preventing incorrect cancellation notices.

Require at least one scheduled full-data export and a tested restore during the POC. Portability is not just a checkbox — it is insurance against vendor failure and a negotiating lever during procurement.

Vendor signals to prefer: public API docs, SDKs, published rate limits, webhook dashboards, and explicit connector support for systems you use (for example Mindbody, Stripe, Shopify, and HubSpot). Tools like Segment or Zapier are useful in the stack, but make sure the engagement platform exposes the raw hooks you need.

Final consideration: prioritize platforms that let you validate a full end-to-end sync and provide exportable raw events — that portability is the single best protection against future migrations or compliance audits.

8 Privacy, Security, and Compliance Controls

Bottom line: Security and privacy are operational features, not optional wrappers. A platform that cannot prove who touched what data, when, and why will slow audits, block campaigns, and expose you to fines and reputational damage.

Practical controls to require from any vendor

- Access governance: role-based controls, single sign-on (SAML/OIDC), and fine-grained permissions so business users can run campaigns without elevated rights.

- Immutable audit trails: searchable, tamper-evident logs that show reads, writes, exports, and suppression changes tied to user id and API key.

- Data lifecycle rules: configurable retention, automated archival, and reversible suppression so you can implement retention policies per region or product line.

- Data residency and routing: ability to restrict storage or processing to specific regions to meet local rules and reduce cross-border risk.

- Encryption posture: strong encryption in flight and at rest, plus a documented key management model and options for customer-managed keys if required.

- Right to be forgotten and export: automated workflows to extract or remove an individual record end to end, including third-party connectors and backups.
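"Tamper-evident" has a concrete meaning you can ask vendors about. One common construction is a hash chain, where each log entry includes the hash of the previous one so that any later edit breaks verification. The sketch below illustrates the idea only; the field names are hypothetical and it is not a description of any vendor's audit log.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder hash for the first entry
FIELDS = ("actor", "action", "target", "prev_hash")


def _digest(body: Dict[str, str]) -> str:
    # sort_keys gives a stable serialization so the hash is reproducible.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: List[Dict[str, str]], actor: str, action: str, target: str) -> None:
    """Append an audit entry chained to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"actor": actor, "action": action, "target": target, "prev_hash": prev_hash}
    log.append({**body, "hash": _digest(body)})


def verify(log: List[Dict[str, str]]) -> bool:
    """Return False if any entry was altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        body = {name: entry[name] for name in FIELDS}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this only proves integrity if the latest hash is also stored somewhere the log's operator cannot rewrite, so ask where that anchor lives.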

Why this matters in practice: Controls matter because real incidents do not look like worst case movies. They are slow leaks, mis-routed exports, or forgotten test datasets that surface during an audit. Responding fast is what limits cost and customer harm, not promises about future roadmap.

Common procurement failures and how to avoid them

Failure mode: vendors provide high-level compliance badges but hide the operational hooks. Do not accept a checkbox SOC 2 statement without the operational details you need to run your business.

- Ask for the playbook: request a documented process for a rights request including SLAs and sample delivered exports.

- Test exports: during a POC, run a full export for a representative cohort and verify deletion or anonymization on the vendor side.

- Simulate an incident: require the vendor to show their alerting and containment steps for a leaked API key or misconfigured connector.

Tradeoff you must accept: stricter controls increase implementation time and sometimes cost. For mid-market B2C, pick the minimal set that secures customer trust and supports audits, then automate the rest. Over-engineering for enterprise scale before you need it is the quickest way to stall a rollout.

Concrete example: A regional healthcare provider discovered audit logs missing key export events during an annual review. They paused marketing sends, required the vendor to replay the export and provide cryptographic evidence of deletion for affected records, and negotiated a contractual remediation SLA. The vendor supplied a complete trace within 48 hours, which avoided regulatory escalation and allowed the provider to resume campaigns with a verified suppression list.

Require live evidence during the demo: do not accept screenshots. Ask to run a rights request and a targeted export for a test user so you can verify timing, completeness, and deletion behavior.

Key negotiable items to include in contracts: response SLAs for rights requests, scope of audit access, data return format, destructive delete confirmation, and options for customer-managed keys. These are the terms that protect you after go-live.

One practical judgment: the single most telling signal of vendor maturity is how they handle edge cases such as expired backups, replayed webhooks, or connector errors. If a vendor cannot demonstrate tested controls for these events in a POC, expect months of firefighting later.

For concrete documentation and implementation checklists, see Gleantap features and high-level compliance guidance from Gartner.

Frequently Asked Questions

Straight answer up front: the questions teams ask during procurement separate plausible vendors from the ones that will add months of work and confusion. Focus your queries on measurable outputs (latency, match rates, incremental lift) and on vendor behavior under failure — not glossy feature lists.

How should I use KPIs to compare vendors during demos? Ask for raw, auditable metrics and a short live test. Demand samples for profile update latency, identity match rate, webhook delivery success, and the vendor’s recent example of incremental conversion lift for a similar client. Do not accept spreadsheet averages without the underlying logs or a POC you can run yourself.

What is the minimum channel set for a mid-market B2C business? Be pragmatic, not aspirational: require native email and SMS plus either push or web messaging. The key is that the platform must orchestrate routing and suppressions across those channels in one decisioning layer so you do not get duplicate sends or inconsistent suppression behavior.

Can we bring our own ML models? Yes in most mature stacks, but verify the integration pattern. Good vendors accept scored outputs via API or a feature-store import, support server-side scoring hooks, and provide latency SLAs for model-driven decisioning. If you expect sub-minute decisions, confirm p95 inference latency and throughput limits before committing.

Is real-time ingestion always necessary? Not always — it depends on the use case. Prioritize real-time for onboarding triggers, payment failure flows, and last-minute reengagements; accept batch for long-term lifecycle analytics and monthly retention analysis. The trade-off is cost: continuous, low-latency evaluation increases compute and monitoring overhead.

What practical first automation should a fitness or wellness operator implement? Build a compact onboarding path: confirmation at booking, a 72-hour prep tip, a 24-hour reminder, a 90-minute nudge, and a 48-hour post-visit feedback + incentive. Instrument a 30-day holdout to measure incremental rebookings and set a frequency cap to avoid over-messaging.
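That onboarding path can be sketched as a schedule computed from two timestamps. The sketch assumes the 72-hour, 24-hour, and 90-minute messages are timed before the visit and the feedback message 48 hours after it, which is one reading of the sequence above; the step names and the rule that skips steps whose send time has already passed at booking are also assumptions.

```python
from datetime import datetime, timedelta
from typing import List, Tuple

# Offsets relative to the visit time (negative = before the visit).
STEPS = [
    ("prep_tip", timedelta(hours=-72)),
    ("reminder", timedelta(hours=-24)),
    ("nudge", timedelta(minutes=-90)),
    ("feedback_incentive", timedelta(hours=48)),
]


def onboarding_schedule(
    booked_at: datetime, visit_at: datetime
) -> List[Tuple[str, datetime]]:
    """Return (step name, send time) pairs for one booking."""
    schedule = [("confirmation", booked_at)]  # sent immediately at booking
    for name, offset in STEPS:
        send_at = visit_at + offset
        # Skip steps that would already be in the past for late bookings.
        if send_at > booked_at:
            schedule.append((name, send_at))
    return schedule
```

Late bookings are the edge case to test: a customer who books the evening before a class should get the confirmation, the 90-minute nudge, and the feedback message, not a stale 72-hour prep tip.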

How do I evaluate data privacy posture in procurement? Request operational evidence: a recent SOC 2 report, a documented rights-request playbook with SLAs, and the ability to run a targeted export & delete during the POC. Screenshots are not sufficient — require live runs so you can time the full workflow end to end.

How long should a POC run and what should it prove? Target 2–6 weeks. The POC must cover a full-data sync, at least one live journey, webhook replay, suppression enforcement, and a small randomized holdout test to validate incremental lift. Use the POC to expose mapping issues and to confirm export/restore behavior — those are the things that block production launches.

Concrete example: A family entertainment center ran a 3-week POC to validate international SMS routing and fallback. During the test they discovered the vendor’s fallback rule defaulted to a promotional email for certain regions. The team switched to a transactional email fallback, re-ran the test, and avoided a potential spike in spam complaints when they rolled out a summer campaign.

Common procurement pitfall most teams miss: Vendors will quote median numbers that mask tail behavior. Insist on p95 metrics and a replayable event log. If a vendor hesitates to provide logs or to run a live failure scenario in a demo, treat that as a material red flag.

Negotiation levers to include in contracts: export & restore guarantees, rights-request SLAs, documented retention policies, and an exit data pack delivered within a fixed window. These items are cheaper while negotiating than during an emergency.

Practical next steps you can run this week:

- Run a micro-POC: push 500 real events from your booking and POS systems and verify profile materialization and dedupe.

- Test a live journey: trigger a payment-failure flow and confirm suppression, fallback routing, and the activity audit trail.

- Validate ML integration: import a small set of scored profiles or call a model endpoint and measure p95 latency.

- Execute a rights request: during the POC, request export and deletion for a test user and time the full process.

- Require raw logs: insist the vendor hands over the event stream for a sample period so your analytics team can run independent checks.

Final judgment: vendors that survive these practical probes and deliver clean, replayable logs plus exportable data are rare. Prioritize those operational guarantees over shiny UX features — they are what keep programs stable as you scale.