Choosing the best customer engagement platform in 2026 matters more than ever as omnichannel expectations, privacy rules, and SMS-driven conversions reshape retention. This ranked, evidence-driven roundup compares the top customer engagement tools of 2026 with concise reviews, pricing snapshots, and clear recommendations for specific use cases, including an honest assessment of Gleantap. Read on for our methodology, side-by-side strengths and weaknesses, and a buyer checklist that helps you pick the right platform for local businesses, DTC commerce, subscription services, or enterprise teams.

Methodology and buyer criteria

Plain rule up front: ranking equals decision support, not gospel. We scored platforms to reflect what matters for buyers in 2026 and then applied real-world filters for integration friction, pricing opacity, and channel deliverability.

Scoring framework and weights

Scoring principle: weights are chosen to reward practical capability that drives retention and conversion — deep features and omnichannel orchestration matter most, but integration and predictable pricing determine whether a tool is usable in production.

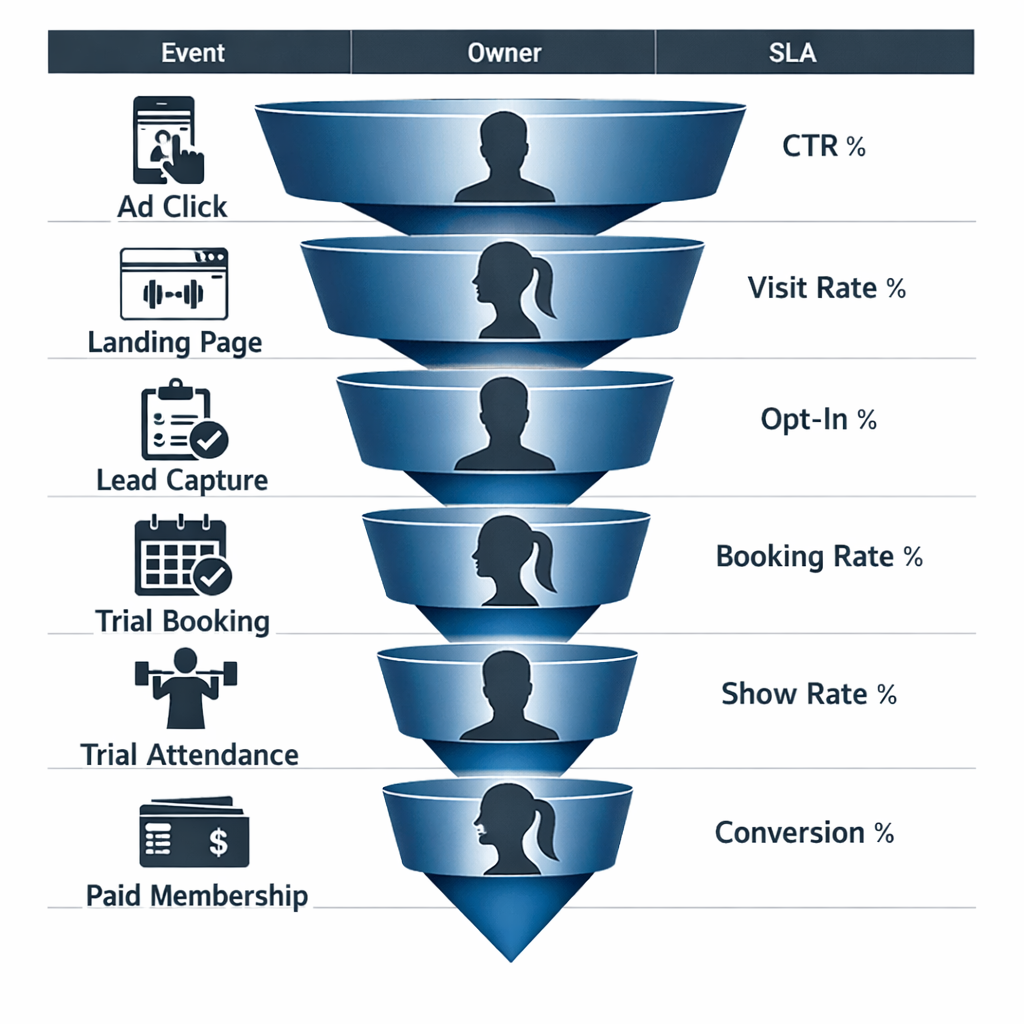

| Category | Weight (%) | Why it matters |
| --- | --- | --- |
| Feature depth | 30 | Controls whether the platform can handle complex journeys and personalization without heavy engineering |
| Omnichannel support | 20 | Real-world retention requires coordinated email, SMS, push, in-app, and conversational channels |
| Personalization and segmentation | 15 | Accurate targeting and dynamic content drive LTV improvements |
| Integrations and API | 15 | Determines time to value and whether you can centralize profiles without rebuilding systems |
| Pricing transparency | 10 | Hidden billing models are the single biggest cause of TCO surprises |
| Support and reliability | 10 | Deliverability, SLA, and vendor responsiveness decide whether campaigns actually land |

Practical tradeoff to expect: the framework favors platforms that deliver end-to-end orchestration out of the box. That penalizes pure API players where you build orchestration on top, an approach that works if you have engineering resources but is a poor fit for lean marketing teams.

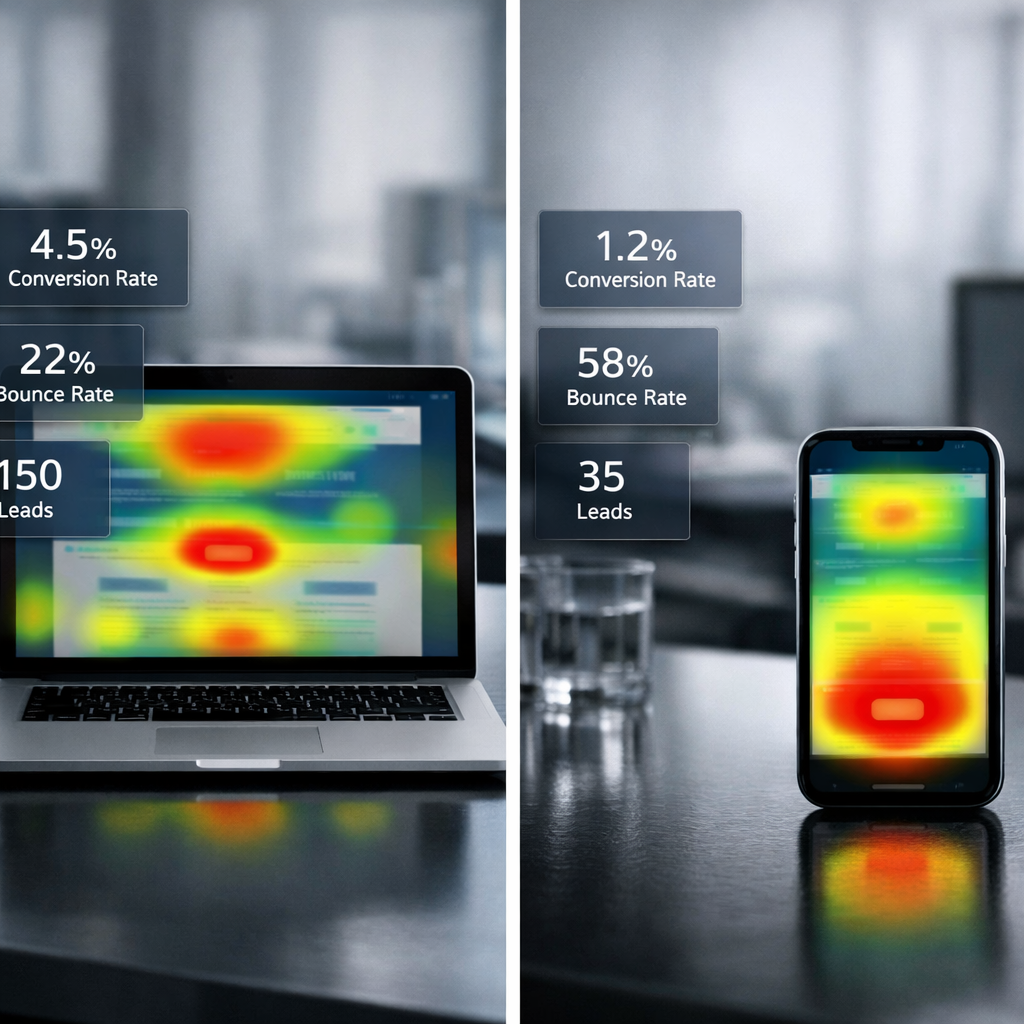

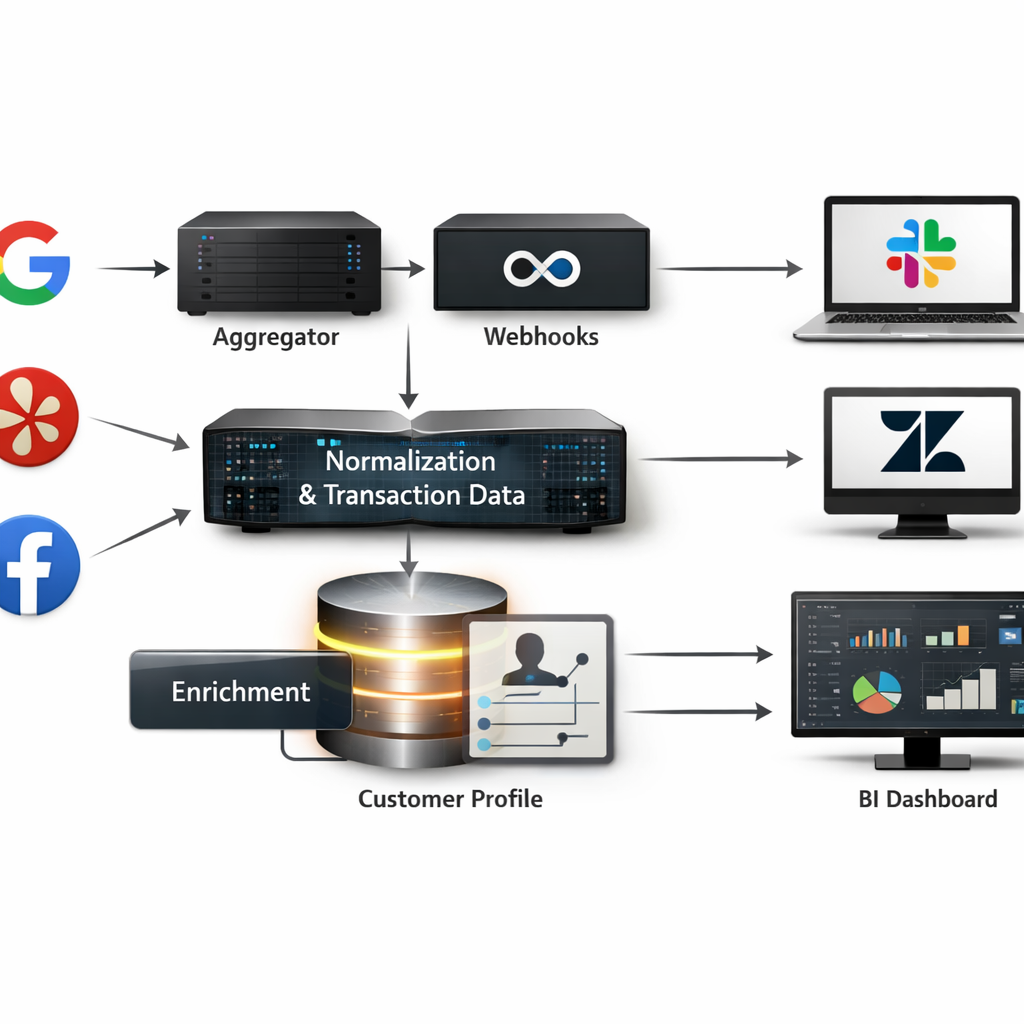

Data sources and validation: we combined vendor docs and pricing pages with aggregated user sentiment from G2 and case studies, then cross-checked feature claims against industry reports like the Forrester Wave and Gartner customer experience guidance. We also drew on operational learnings from SMS tooling and best practices in conversational marketing.

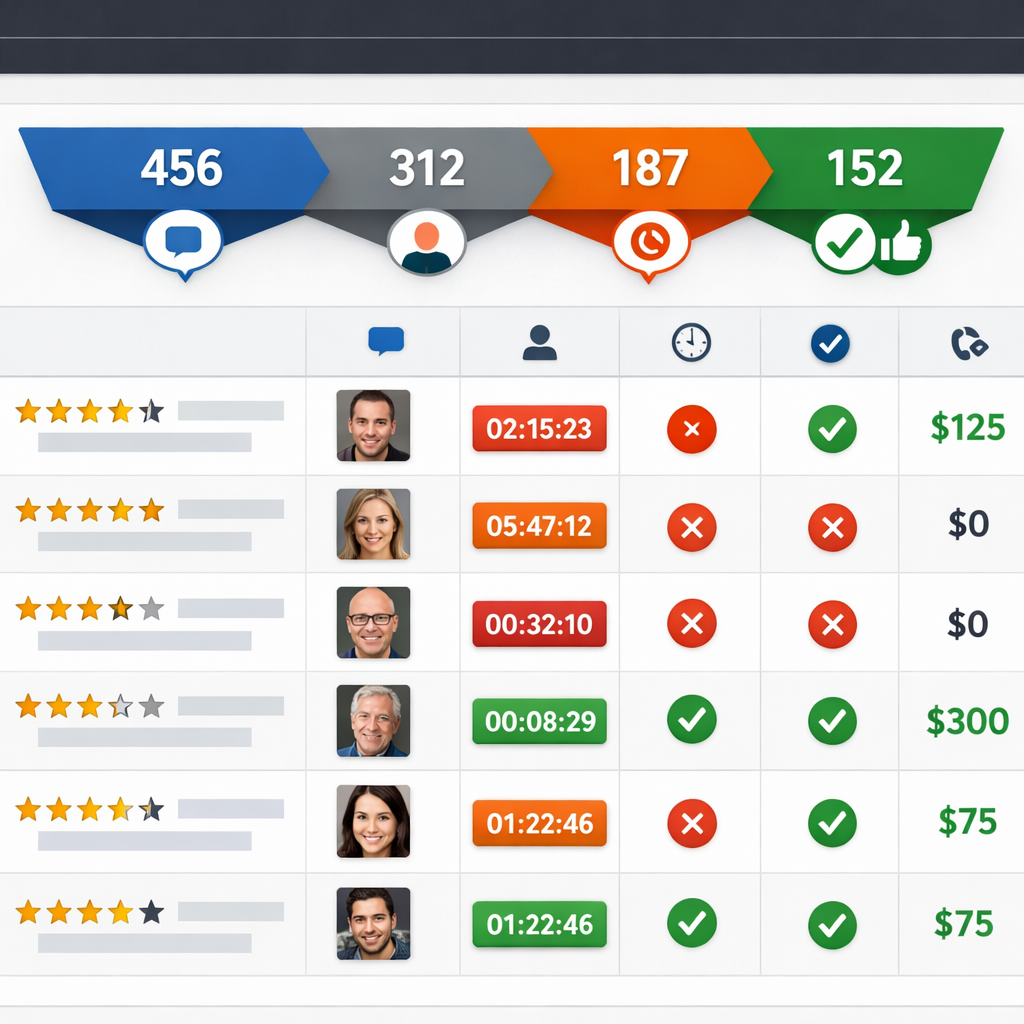

- What we measured in practice: time to first live campaign, number of prebuilt integrations for CRM and booking systems, and clarity of pricing for a 50k MAU scenario

- Where vendor materials fall short: product documentation often omits integration edge cases and queueing/delivery guarantees for high SMS volumes

- Biases to watch for: G2 reviews skew toward recent adopters and happiest customers; Forrester and Gartner reflect enterprise priorities rather than SMB workflows

Concrete example: a 20 location gym chain evaluating platforms will care more about SMS deliverability, prebuilt booking integrations, and pricing by message volume than enterprise experimentation features. Under our scoring that business should weight Omnichannel support and Pricing transparency higher than the default – run a 30 day proof of concept that measures delivered SMS rates, appointment fill uplift, and real billing for message volume.
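
The weighting framework and the re-weighting described in the example above can be sketched as a simple weighted average. This is a minimal illustration, not the exact scoring procedure behind the ranking: the 0 to 10 rating scale, the `example_ratings` values, and the `local_weights` adjustment are all hypothetical assumptions. Only the default weights come from the scoring table.

```python
# Default weights taken from the scoring table (percent).
DEFAULT_WEIGHTS = {
    "feature_depth": 30,
    "omnichannel_support": 20,
    "personalization_segmentation": 15,
    "integrations_api": 15,
    "pricing_transparency": 10,
    "support_reliability": 10,
}


def weighted_score(ratings, weights=DEFAULT_WEIGHTS):
    """Return a composite score from per-category ratings.

    Assumes ratings use a 0-10 scale (an assumption, not from the article).
    Weights are normalized, so a custom set does not need to sum to 100.
    """
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(ratings[name] * w for name, w in weights.items()) / total_weight


# Hypothetical ratings for an illustrative vendor (not real scores).
example_ratings = {
    "feature_depth": 6,
    "omnichannel_support": 8,
    "personalization_segmentation": 5,
    "integrations_api": 7,
    "pricing_transparency": 9,
    "support_reliability": 8,
}

# Hypothetical re-weighting for an SMS-heavy, multi-location business:
# more emphasis on omnichannel support and pricing transparency.
local_weights = dict(
    DEFAULT_WEIGHTS,
    feature_depth=10,
    omnichannel_support=30,
    pricing_transparency=20,
)

print(weighted_score(example_ratings))                 # 6.9 with default weights
print(weighted_score(example_ratings, local_weights))  # 7.4 with local weights
```

The same vendor moves from 6.9 to 7.4 once the weights reflect an SMS-heavy buyer, which is why the shortlist should be rebuilt with your own weights before you compare vendors.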

How to use the ranking: use the scores to shortlist 2-3 vendors, then run the same acceptance tests across each: import 5k profiles with consent records, trigger 3 production flows (reminder, churn prevention, promo), and request a contractual pricing scenario for your monthly active contacts and outbound SMS volume. If a vendor refuses to provide this level of transparency, treat pricing and deliverability claims as unverified.

Key takeaway: weighting and real-world tests matter more than vendor marketing. Match the scoring emphasis to your team – if you are engineering heavy, lean toward API-first vendors; if you are retention focused with limited engineers, prioritize platforms with built in SMS workflows and clear message pricing like those compared here.

1. Gleantap

Short verdict: Gleantap is an SMS-first, omnichannel tool built for appointment-based and local businesses that want fast time-to-value on retention and bookings rather than enterprise-grade experimentation or full data-science personalization.

Standout features and where they matter

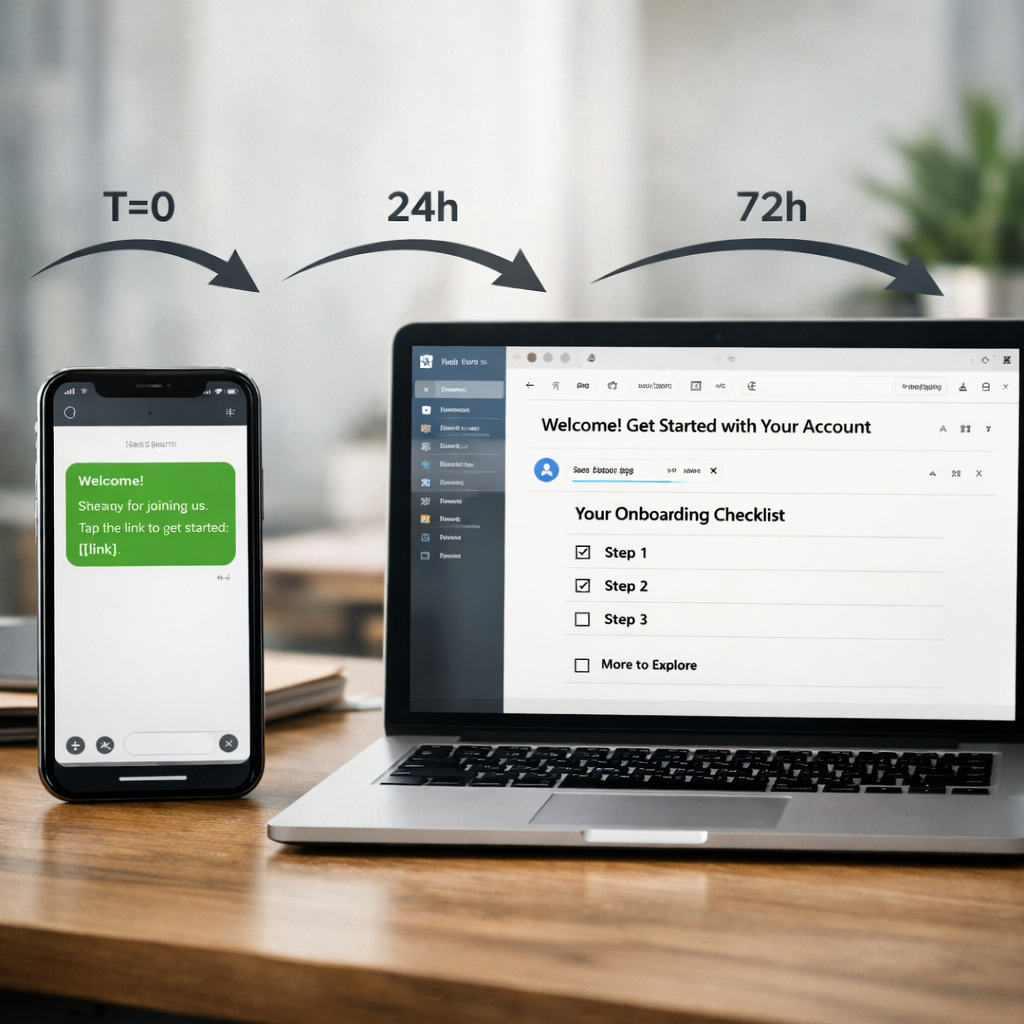

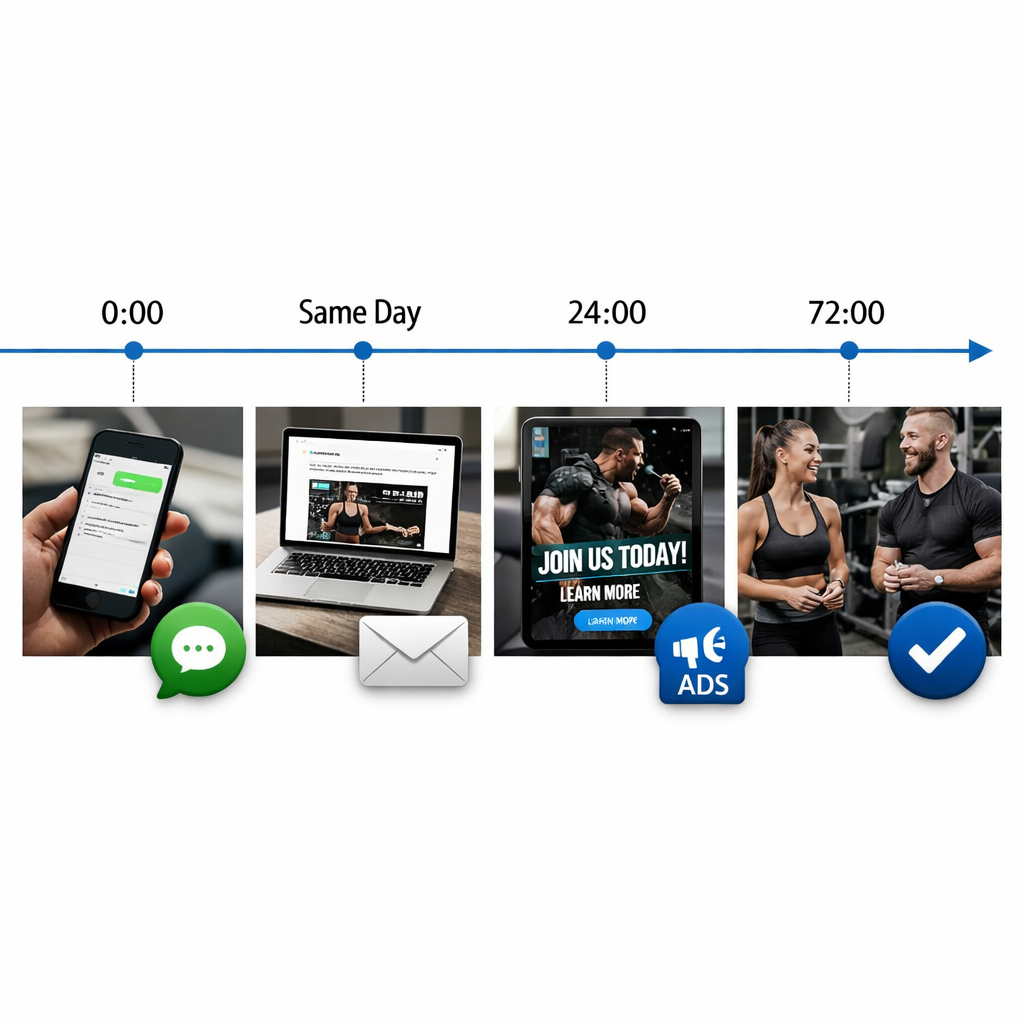

- Prebuilt SMS automation templates: reminders, confirmations, re-engagement and churn recovery flows tuned for gyms, salons, clinics, and other appointment businesses.

- Opt-in optimization guidance: in-product copy and timing suggestions to maximize compliant consent capture and improve deliverability.

- Booking and CRM integrations: out-of-the-box connectors for common scheduling systems plus Zapier for simpler two-way syncs without heavy engineering.

- Message-level analytics: delivery, open and response rates focused on SMS health rather than complex predictive modeling.

Practical tradeoff: An SMS-first posture gives immediate engagement gains but shifts responsibility to the buyer for strong consent management and carrier compliance. Gleantap reduces the work by providing opt-in templates and guidance, but you still need documented consent records and careful messaging cadence to avoid carrier filtering.

Concrete example: A 10-location fitness studio uses Gleantap to automate class reminders, waitlist notifications, and a 7-day rebook drip after a missed session. Within 30 days the studio stops manually calling no-shows, reduces admin time, and sees noticeably higher rebooking because messages arrive at the decision moment. This is a typical rapid-win scenario where templates and booking integrations matter more than complex audience scoring.

- Pros: fast setup for appointment workflows, cost-efficient SMS bundles for local volumes, clear pricing tiers, strong deliverability practices for US carriers.

- Cons: limited advanced A/B testing and experimentation compared with Braze or Iterable, fewer built-in email capabilities, and smaller support for global SMS complexity (short codes, local sender IDs) than Twilio.

Judgment: Choose Gleantap when your priority is operational improvement — reducing no-shows, increasing repeat visits, or automating time-sensitive customer messages — and you lack engineering bandwidth. Do not choose it if your roadmap depends on multi-touch, cross-channel experimentation tied to a data warehouse or if you need enterprise-grade identity stitching and predictive models.

Integration note: If your business relies on real-time booking updates, insist on two-way sync during the demo. Ask the vendor to show a live cancellation flow that updates customer profiles and suppresses outbound reminders. If you need global scale or custom routing, compare Gleantap against Twilio’s programmable messaging and the carrier controls described in Twilio's conversational marketing guidance.

Key takeaway: Gleantap wins for local, appointment-driven operations because it converts the SMS channel into operational automation quickly. If your KPIs are bookings, attendance, and short-term retention, try a pilot focusing on reminders + re-engagement flows and measure lift in rebook rate and admin hours saved.

2. Intercom

Straight verdict: Intercom is the best practical choice when your primary goal is conversational product experiences and in‑app support, not large scale cross channel marketing orchestration. It wins on real time conversation tooling and product‑led flows, and loses on cost and deep multi touch journey flexibility.

Key capabilities and how they matter

Standout features: Intercom combines a fast live chat inbox, Custom Bots, and Product Tours with outbound message capabilities tied to user events. The platform is optimized for real time help, contextual in‑product messages, and lightweight targeted campaigns initiated from product usage signals.

- Real time conversation: best in class UX for live agents and asynchronous chat that keeps support friction low

- Product Messaging: Product Tours and in‑app banners let product teams drive activation without separate email sequences

- Automation for support: Bots and routing rules reduce agent load and speed response times

- Limits for large marketing plays: not engineered for complex multi channel canvases or heavy SMS first lifecycle programs

Pricing snapshot: Intercom mixes per seat charges, active user/contact components, and add ons for advanced features. Expect total cost to scale quickly as you add seats and expand outbound messaging. Ask for a line item scenario for your monthly active users, expected message volume, and number of seats rather than relying on list price.

Integrations and ecosystem: Intercom connects to major CRMs and CDPs through native integrations and Zapier, but many teams rely on a secondary vendor for SMS or heavy email orchestration which adds operational complexity and hidden TCO. See independent market context in the Forrester Wave.

Practical tradeoff that matters: Intercom reduces support friction and improves in‑product conversion, but buyers often underestimate the cost of extending it into omnichannel marketing. If you need granular experimentation across email, push, and SMS with enterprise scale segmentation, Intercom forces either complex workarounds or additional vendors.

Concrete Example: A mid‑market SaaS replaced a standalone helpdesk with Intercom to consolidate onboarding and support. They used Product Tours for first‑time user flows and Custom Bots to capture qualification details before routing to CSMs. The result was a noticeable drop in repetitive tickets and faster handoffs for high value accounts, achieved without new engineering work.

Common misjudgment: Teams assume Intercom is a plug and play marketing automation substitute. In practice its segmentation and orchestration are intentionally product and support focused. Trying to stretch it into heavy lifecycle marketing usually creates duplicated data flows and higher costs than selecting a purpose built orchestration platform.

Key takeaway: Use Intercom when conversational UX and in‑app engagement are primary. If you expect to own broad omnichannel journeys or heavy SMS volume, evaluate platforms built for marketing orchestration or pair Intercom with a dedicated engagement tool and budget for integration overhead.

Next consideration: If you are product led and need tight support to product handoff, shortlist Intercom and request a pricing scenario for your expected seats and MAUs. If your roadmap emphasizes multi channel campaigns, include Braze or Iterable in the same RFP to compare true orchestration capabilities and end to end TCO.

3. Braze

Clear position: Braze is the platform you pick when you need real time orchestration, fine grained personalization, and analytics at scale across mobile and web. It is not the cheapest or the fastest to stand up, but when your growth team needs coordinated cross channel campaigns powered by clean event data, Braze delivers predictable outcomes.

Standout capabilities

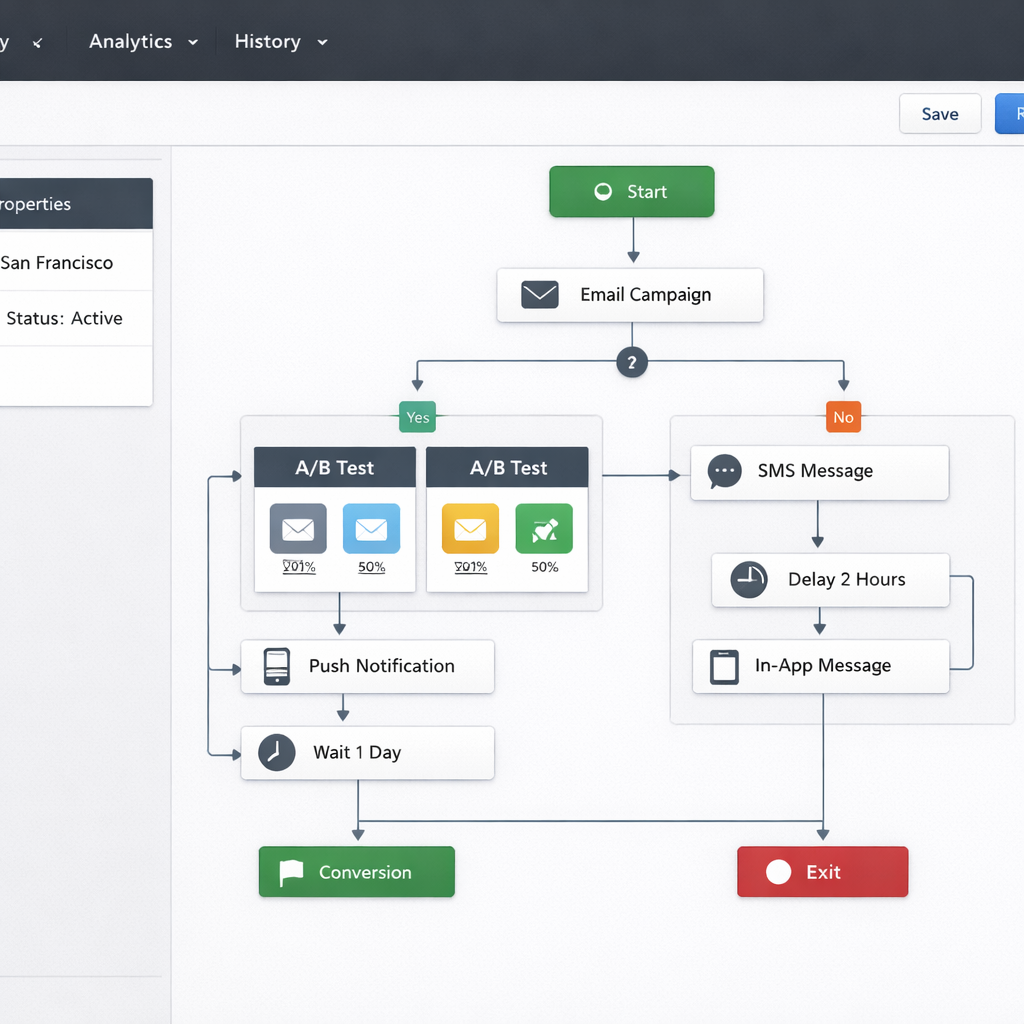

Core strengths: Canvas orchestration for composing multi step journeys, advanced segmentation with behavioral windows, and runtime personalization templates that scale across email, push, in app, and SMS via partners. Braze ships good exportable analytics and native hooks to CDPs and warehouses such as Snowflake and mParticle, which keeps data fidelity high for real time decisioning.

- Advanced orchestration: Canvas lets you build conditional multi channel flows with event triggers and wait logic.

- Data-first personalization: Works best when fed by mature data pipelines from Snowflake, Segment, or mParticle.

- Enterprise analytics: Cohort, funnel, and experimentation features that tie messaging performance back to retention metrics.

Tradeoff to plan for: Braze expects data maturity and engineering involvement. If your team lacks reliable event streams or a CDP, you will pay for implementation and consulting time. That cost is real and often exceeds licensing for early projects.

Practical limitation and TCO nuance

Pricing reality: Braze uses enterprise contact models and custom quotes. That yields flexible scale but opaque total cost. Watch for billed active profiles, message channel add ons, and fees for deliverability services. For SMS specifically, Braze often routes through integrations or partners which can add per message fees – check whether carrier costs are included.

What teams get wrong: Many buyers assume Braze will reduce engineering work because it has connectors. In practice you still need data engineering to produce consistent user events and identity stitching. Expect a 2 to 6 month onboarding window for sophisticated use cases.

Concrete use case

Concrete example: A mobile gaming studio uses Braze to run level-completion journeys that trigger a push, then an in-app message, and finally a personalized email if the player has not returned within 48 hours. Event data flows from Snowflake into Braze and templates populate game-specific rewards, measurably lifting 7-day retention while enabling A/B testing on incentive levels. This is a high-return scenario where precise segmentation and speed matter.

Another realistic application: A retail brand uses Braze to A/B test product carousel content across email and push, merging product recommendation output from a CDP with Braze templates to increase repeat purchases. The integration complexity is worth it when average order value gains exceed implementation cost.

- When to pick Braze: Enterprise and high growth consumer apps that already have CDP or warehouse investments and need sophisticated personalization and experimentation.

- When to avoid Braze: Small teams or local businesses that prioritize fast time to value, low engineering cost, and transparent per message pricing.

Verdict – Best for enterprise and high growth consumer apps that need robust personalization and analytics. Budget and data maturity are decisive factors.

Ask this in demos: Request a pricing scenario that matches your expected monthly active profiles, message mix by channel, and whether SMS is billed separately. Also ask how identity stitching is counted in active profile calculations.

4. Klaviyo

Core position: Klaviyo remains the dominant choice for merchants who measure platform value in direct revenue per message — it is the easiest path from Shopify product data to revenue-driven email and SMS flows in 2026.

What Klaviyo does best

Commerce-first automation: Klaviyo converts order, product, and browse events into ready-made lifecycle flows and revenue reports faster than most competitors. If you run a DTC store on Shopify or BigCommerce, Klaviyo will get you to meaningful win-back and cart recovery programs with minimal engineering.

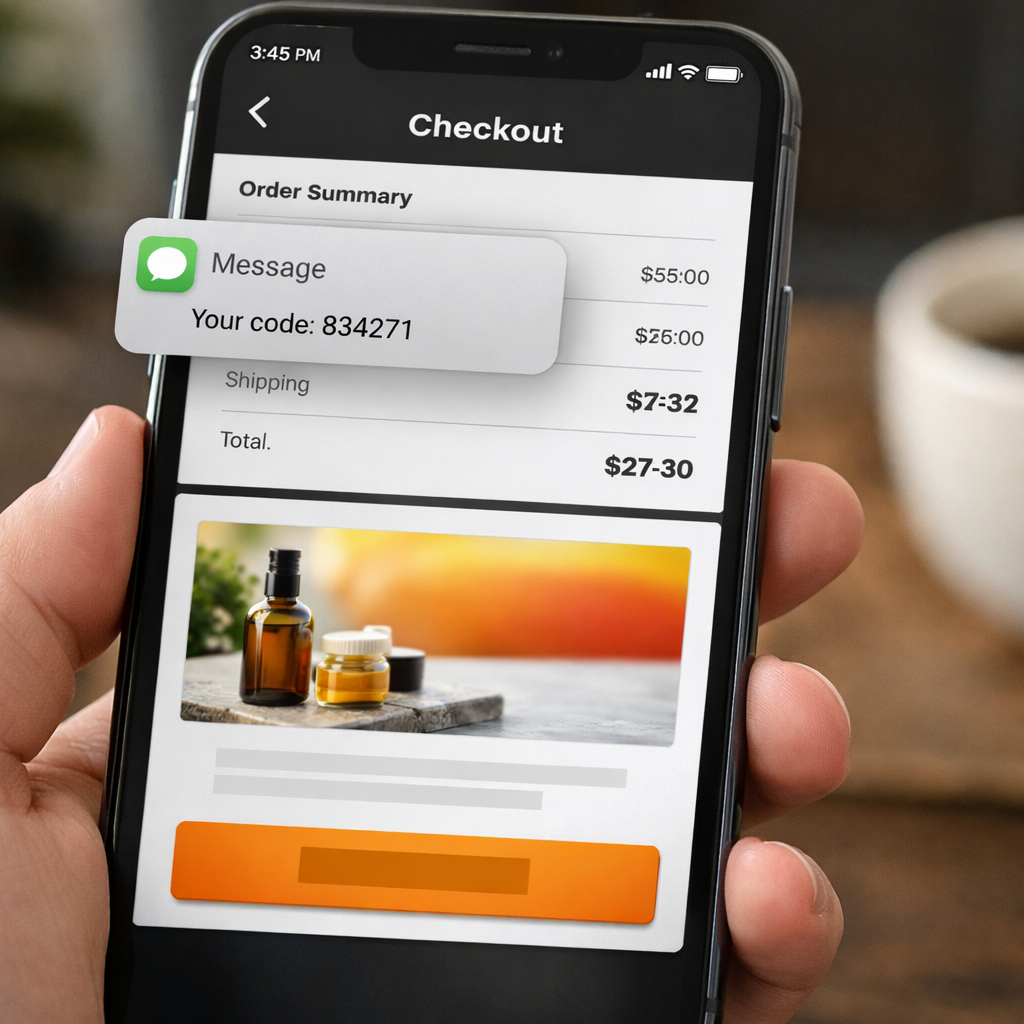

Email and SMS pairing: Klaviyo bundles SMS with email templates and shared segmentation so you can test channel mixes without stitching two vendor stacks. That integrated billing and templating is why many merchants treat Klaviyo as their full stack for lifecycle campaigns.

Limitations and tradeoffs you must accept

Not built for heavyweight orchestration: Klaviyo shines on commerce flows but shows limits when you need complex cross-product journeys, real-time decisioning across dozens of touchpoints, or enterprise-grade experimentation. Expect to hit the ceiling if you need deep CDP-style identity stitching or orchestrations that span product, support, and billing systems.

Pricing friction: Klaviyo charges mainly on contact list size with SMS credits layered on top. That model rewards revenue-focused pruning but punishes large, low-activity lists. Practical consequence: without aggressive list hygiene and suppression you’ll pay substantially more as your CRM churns.

- Standout features: Rapid Shopify sync, prebuilt commerce flows, visual flow builder, revenue attribution reports

- Best fit: DTC brands, subscription boxes, and small chains that need fast, revenue-focused automation

- Not ideal for: Enterprises requiring advanced omnichannel orchestration, heavy CDP workloads, or complex consent workflows

Integration specifics: Klaviyo integrates tightly with Shopify, Magento, and common analytics tools; use Segment or your data warehouse only if you need to centralize profiles outside Klaviyo. If your product catalog or order events are custom, expect engineering work to maintain clean, normalized events.

Concrete example: A mid-market apparel merchant on Shopify used Klaviyo to implement a combined cart-abandon email plus SMS sequence and a post-purchase replenishment flow. Within six weeks the new flows accounted for noticeably faster recoveries and clearer revenue attribution in Klaviyo reports — the team reduced manual promo emails and could target high-value buyers using product-level segmentation.

Practical insight: Teams often treat Klaviyo as a catch-all and import every contact to maximize reach. That backfires. Maintain selective active lists, use event-triggered segments, and archive stale contacts to keep costs down and improve deliverability.

| Category | Snapshot |
| --- | --- |
| Pricing model | Contact-based tiers + SMS credits |
| Channels | Email, SMS, basic push via integrations |
| Top integrations | Shopify, BigCommerce, Magento, Segment |
| Strength | Commerce automation and revenue analytics |
| Weakness | Limited enterprise orchestration and CDP features |

Key takeaway: Choose Klaviyo when rapid, revenue-measured email + SMS automation for commerce is the priority. If you need cross-product orchestration, strict data governance, or to minimize active-profile costs at scale, evaluate CDP-first or enterprise orchestration platforms alongside Klaviyo.

Next consideration: During evaluation ask for a pricing scenario that mirrors your active contacts after suppression, and review how Klaviyo will import consent records and unsubscribe history from your checkout and booking systems.

Judgment: Klaviyo is the safest bet for e-commerce teams that want revenue-first automation without building pipelines. It becomes the wrong choice when your roadmap requires enterprise data unification, advanced cross-channel decisioning, or a pricing model optimized for very large but low-activity audiences.

5. Iterable

Straight answer: Iterable is the platform to pick when your marketing team needs powerful journey orchestration, flexible data modeling, and built in experimentation without committing to the complexity of a full enterprise stack.

Standout features

- Journey orchestration: visual canvas for multi step, conditional paths across email, push, in app, and SMS endpoints.

- Data model flexibility: supports rich event schemas and custom profile attributes to drive segmentation and personalization.

- Experimentation built in: A/B and multivariate testing at branch or message level so tests run inside journeys.

- Real time event ingestion: low latency triggers for time sensitive flows when your stack feeds clean events.

Key tradeoff: Iterable rewards teams that have reliable event data and a CDP or data warehouse. Without clean events and defined identity stitching, the platform becomes a heavy campaign manager rather than a true real time orchestrator.

Pricing, integrations, and vendor posture

Pricing snapshot: contact based pricing with enterprise tiers and optional deliverability or managed services addons. Prepare for higher TCO than SMB oriented tools when you enable advanced features and extra channels.

Integrations: prebuilt connectors for Segment and major CDPs, native links to data warehouses, and common analytics tools. For teams that prioritize first-party data and CDP-driven personalization, Iterable plays well with the stack buyers prefer in 2026. See the Forrester Wave for cross-channel campaign management for market context.

Operational consideration: implementations frequently require mapping event schemas, building identity resolution, and dedicating engineering time to real time webhooks. Expect a 6 to 12 week implementation for mid complexity use cases.

Concrete example

Concrete example: A subscription ecommerce brand used Iterable to run a post-purchase onboarding journey that combined email, push, and SMS. Engineering pushed purchase and shipment events into Iterable via the data pipeline, marketing created an A/B test on onboarding subject lines inside the journey, and the team measured LTV lift at 30 days. The result was measurable improvement in first repeat purchase, but the project required upfront event cleanup and an extra QA cycle to validate identity joins.

Iterable gives marketing teams control and experimentability at scale, but that control is only as good as the quality of event data feeding the system.

Ask Iterable during a demo about real time event latency, test environment support, and how identity stitching works with your CDP or data warehouse. These are the practical items that determine success or long delays in time to value.

Who should pick Iterable: Teams that run multi touchpoint customer journeys, prioritize built in experimentation, and can commit engineering time to set up a reliable event stream. If your priority is fast SMS first workflows for local businesses, consider a specialist like Gleantap instead.

Next consideration: Before shortlisting Iterable, audit your event quality and identity strategy. If those are weak, you will pay for power you cannot use; if they are solid, Iterable scales experimentation and cross channel orchestration cleanly.

6. Customer.io

Direct point: Customer.io rewards discipline. If your product teams send clean event streams and you need precise, event triggered workflows, Customer.io delivers control and reliability that generalist tools do not match.

Core strengths

Event first architecture: Customer.io is built around events and attributes rather than static lists. That makes it excellent for product driven lifecycle messaging where triggers matter more than static cohorts.

- Fine grained segmentation: Build rules off custom events and attributes for highly targeted flows

- Developer friendly API and SDKs: Easy to push new event types or enrich profiles programmatically

- Reliable delivery for transactional email and triggered messages: Good for onboarding, billing, and behavior based alerts

- Flexible data integrations: Native connectors for Segment and Snowflake and robust webhook support

Concrete example: A mid market SaaS product uses Customer.io to run an onboarding sequence that changes based on user events. When a user completes a key action the product triggers an event, Customer.io moves the user to a new path, and subsequent messages adapt to real time behavior. That setup reduced time to activation by two weeks for one customer in 2025.

Practical trade offs and implementation notes

Reality check: Customer.io is not a plug and play commerce stack. Teams without a stable event model or without engineering support face a steep setup cost and ongoing maintenance overhead.

- Pricing trap to watch: Active profile pricing plus heavy event ingestion can inflate TCO if you send many high cardinality events

- UI learning curve: The workflow builder is powerful but requires attention for complex branches and retry logic

- Channel coverage: Email and event triggered messages are core strengths; for heavy SMS or omnichannel experimentation you will need to compare TCO and native feature parity versus alternatives

Common misread: People assume event driven equals low effort. In practice the platform amplifies good data and punishes messy identity stitching. If profiles are fragmented your targeting will be noisy and deliverability and measurement suffer.

Key takeaway: Choose Customer.io when you can instrument consistent events and want developer level control over lifecycle messaging; avoid it when you need white glove commerce templates or minimal engineering involvement.

Integration note: Customer.io plays well with CDPs and warehouses. If you want unified profiles and advanced analytics connect to Snowflake or your CDP and use the webhook layer for custom syncs. See industry context in the Forrester Wave and user sentiment on G2.

Who should trial it: Product and growth teams at mid market SaaS that run event heavy lifecycle programs and have engineering bandwidth to maintain a clean event model.

Takeaway: If your organization can enforce a single event taxonomy and tolerate an initial engineering investment, Customer.io gives the most precise event-driven control for lifecycle messaging among the leading customer engagement platforms in 2026. Otherwise, evaluate solutions with stronger out-of-the-box commerce or SMS bundles, such as Klaviyo or Gleantap, and compare pricing for your expected active profiles and message volumes.

7. Twilio

Direct assertion: Twilio is not a turnkey engagement platform – it is a communications platform built from primitives. For teams that want absolute control over messaging, global reach, and programmable integrations, Twilio is unmatched. For teams that need ready-made marketing workflows, segmentation UI, and low engineering overhead, Twilio adds significant build and operational work.

What Twilio gives you and what it does not

Strengths: Twilio provides flexible APIs for SMS, MMS, voice, WhatsApp, and email via SendGrid, plus a Conversations API to thread multi-channel dialogs. It has superior global phone number coverage, carrier-level deliverability tools, and a large partner ecosystem including Segment. These primitives let engineering teams build custom engagement flows and integrate deeply with backend events and CRM systems.

Limits and tradeoffs: Twilio expects you to assemble orchestration, consent management, templates, analytics dashboards, and campaign UIs. Managed products or partner layers exist but increase cost. Expect ongoing work for carrier compliance – US 10DLC registration, short code provisioning, and conversation logging – which can be operationally heavy and add monthly fees beyond simple per-message pricing.

| Capability | Practical implication |
| --- | --- |
| Programmable messaging APIs | Maximum flexibility to create nonstandard flows but requires engineering to build UI and rules |
| Global phone numbers and delivery controls | Strong for international scale and transactional reliability; carrier registration work is required |
| Pay-as-you-go pricing | Predictable at low volume but TCO rises when you add registrations, dedicated numbers, and compliance services |
| No built-in marketing orchestration UI | Marketers will need a partner product or custom tooling for segmentation, A/B testing, and campaign scheduling |

Concrete example: A European logistics startup used Twilio to power delivery notifications across SMS, WhatsApp, and voice. Engineering implemented the Conversations API to keep driver and customer threads in a single view and integrated webhooks with their dispatch system for real time status updates. The result was a smooth multi-channel user experience, but the team spent three months building templates, consent tracking, and carrier registrations before the program reached steady state.

- Practical insight: If your priority is a custom communication stack and you have engineering bandwidth, Twilio lowers technical limits and supports global scale. Do not underestimate the product and ops work required to turn those primitives into a full featured engagement platform.

- Cost consideration: Twilio message pricing can look cheaper than bundled platforms at first glance. Factor in phone number fees, short code or toll free costs, 10DLC registrations, and the cost of building compliance and campaign tooling when estimating TCO.

- Integration advantage: Use Twilio when you need deep backend integrations, programmatic control over message routing, or to connect to legacy telephony systems. If you need fast marketing experiments and out of the box segmentation, prefer a higher level platform or a Twilio partner.
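
The cost consideration above comes down to simple arithmetic: per-message charges are only one term in the first-year total. The sketch below shows one way to add the terms up. The field names and every number in the usage example are hypothetical placeholders, not Twilio prices; substitute figures from your own quote and engineering estimates.

```python
from dataclasses import dataclass


@dataclass
class MessagingCosts:
    """Inputs for a first-year cost estimate on a build-it-yourself messaging stack.

    All fields are illustrative assumptions; none are vendor list prices.
    """
    messages_per_month: int
    price_per_message: float
    number_fees_per_month: float         # phone numbers, short code or toll-free
    compliance_tooling_per_month: float  # consent tracking, logging, monitoring
    registration_fees_one_time: float    # e.g. 10DLC brand and campaign registration
    build_hours: float                   # engineering time for campaign tooling
    hourly_engineering_rate: float

    def first_year_total(self) -> float:
        recurring = 12 * (
            self.messages_per_month * self.price_per_message
            + self.number_fees_per_month
            + self.compliance_tooling_per_month
        )
        one_time = (
            self.registration_fees_one_time
            + self.build_hours * self.hourly_engineering_rate
        )
        return recurring + one_time

    def effective_cost_per_message(self) -> float:
        if self.messages_per_month <= 0:
            raise ValueError("messages_per_month must be positive")
        return self.first_year_total() / (12 * self.messages_per_month)


# Hypothetical scenario: placeholder numbers only.
scenario = MessagingCosts(
    messages_per_month=100_000,
    price_per_message=0.01,
    number_fees_per_month=50.0,
    compliance_tooling_per_month=200.0,
    registration_fees_one_time=500.0,
    build_hours=300,
    hourly_engineering_rate=100.0,
)
print(f"first-year total: {scenario.first_year_total():,.2f}")
print(f"effective cost per message: {scenario.effective_cost_per_message():.4f}")
```

Comparing `effective_cost_per_message()` with the list price per message shows how much of the first-year cost sits outside the message rate itself.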

Key takeaway: Twilio is the right choice when control, global delivery, and programmable flexibility matter more than speed to value. For marketing led teams with limited engineering resources, choose a managed engagement platform or a Twilio partner to avoid hidden operational costs.

For further reading on conversational use cases and implementation patterns, see the Twilio Blog's resources on conversational marketing. If your priority is SMS-first workflows for local businesses where time to value matters, compare Twilio against platforms built for that use case such as Gleantap.

Frequently Asked Questions

Short answer first: pick the platform that solves your primary business constraint, not the one with the longest feature list. For many small and mid market teams that means prioritizing delivery, predictable pricing, consent management, and speed to value over advanced experimentation suites.

Direct, practical answers

- What will actually drive cost up after purchase: vendor meters. If pricing is per active profile your bill grows with the size of your audience even if engagement is infrequent. If pricing is per message you pay for spikes. Build a simple 12 month model with realistic churn and campaign frequency and ask the vendor to run the numbers against it.

- How to judge deliverability claims: ask for sender reputation data and recent campaign performance for comparable verticals. Vendors that operate their own carrier relationships are easier to troubleshoot for high volume SMS than API only providers.

- Can I keep consent and message history when I leave: yes but only if you enforce data export terms before buying. Require exportable consent logs, timestamps, and message receipts in your contract and validate an export in your proof of concept.

- Will AI personalization fix low engagement: not by itself. AI helps scale template variants and subject lines, but if your data model is fragmented personalization amplifies noise. Fix unified profiles first, then apply AI driven variants.

- How much engineering is required: expect a gap between demo and production. Products marketed as no code still need webhooks, data pipelines, and consent sync. Budget at least a couple of sprints for reliable production integrations.
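
The 12-month model suggested in the first answer above can be as small as the sketch below. It projects an audience with growth and churn, then prices the same year under per-active-profile billing and per-message billing. The function names, rates, and prices are hypothetical assumptions for illustration; the 50,000 starting audience mirrors the 50k MAU scenario used in the methodology.

```python
def project_audience(start_profiles, monthly_growth, monthly_churn, months=12):
    """Return the active-profile count at the start of each month."""
    counts = []
    profiles = float(start_profiles)
    for _ in range(months):
        counts.append(round(profiles))
        profiles *= 1 + monthly_growth - monthly_churn
    return counts


def per_profile_cost(audience, price_per_profile):
    """Monthly bill when the vendor meters active profiles."""
    return [n * price_per_profile for n in audience]


def per_message_cost(audience, messages_per_profile, price_per_message):
    """Monthly bill when the vendor meters messages.

    messages_per_profile has one entry per month so campaign spikes show up.
    """
    if len(audience) != len(messages_per_profile):
        raise ValueError("need one messages_per_profile entry per month")
    return [
        n * m * price_per_message
        for n, m in zip(audience, messages_per_profile)
    ]


# Hypothetical inputs: replace with your own churn, frequency, and quoted prices.
audience = project_audience(50_000, monthly_growth=0.03, monthly_churn=0.02)
frequency = [4] * 10 + [8, 10]  # heavier sending in the last two months

profile_bill = per_profile_cost(audience, price_per_profile=0.02)
message_bill = per_message_cost(audience, frequency, price_per_message=0.01)

print(f"per-profile annual total: {sum(profile_bill):,.2f}")
print(f"per-message annual total: {sum(message_bill):,.2f}")
print(f"peak month, per-message: {max(message_bill):,.2f}")
```

Handing the vendor the same audience and frequency lists and asking them to fill in the prices makes competing quotes directly comparable.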

If your team lacks engineering capacity, choose a platform with prebuilt booking, POS, or ecommerce integrations and hands on onboarding support rather than pure API providers.

Concrete example: A 25-location fitness chain switched from an email-centric platform to an SMS-first solution and recovered 18 percent of no-show appointments in the first 60 days. They cut campaign setup from three days of work to configuring a single campaign template and avoided a 40 percent increase in monthly spend by switching from active-profile billing to message-based bundles.

Common misjudgment: buyers assume omnichannel means all channels are equal. In practice channels have different economics and operational costs. Treat email, SMS, push, and in-app messaging as separate products inside your program and measure channel-specific ROI before expanding orchestration complexity.

Demo checklist: demand a live walkthrough of these three items on day one with your data sample: 1) show how consent and opt-outs are stored and exported, 2) demonstrate a pricing scenario for your projected monthly volume, and 3) run a sample audience through the platform to show real-time segmentation and message rendering.

When to pick a specialist vs a generalist: go specialist if your business depends on one dominant channel and you need speed to value. Choose a generalist if you require deeply integrated cross channel journeys and have the team to operate them. Specialists often win for local, appointment based, and SMS heavy workflows; generalists win when you need cross channel experimentation at scale.

For pragmatic next steps: 1) map your channel mix and expected monthly volumes, 2) run the vendor demo checklist above with your data, and 3) ask for a time boxed pilot that includes exportable consent and message logs.
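
One way to validate an exported consent log during a pilot is a short script that checks every row for the fields you would need after leaving the vendor. This is a sketch under stated assumptions: the column names in `REQUIRED_FIELDS` and the sample rows are hypothetical, and a real export will use the vendor's own schema.

```python
import csv
import io
from datetime import datetime

# Hypothetical column names; map these to the vendor's actual export schema.
REQUIRED_FIELDS = (
    "contact_id",
    "channel",
    "consent_status",
    "consent_timestamp",
    "source",
)


def validate_consent_export(csv_text):
    """Return a list of (row_number, problem) tuples for a consent-log CSV."""
    reader = csv.DictReader(io.StringIO(csv_text))
    header = reader.fieldnames or []
    missing_columns = [f for f in REQUIRED_FIELDS if f not in header]
    if missing_columns:
        return [(0, f"missing columns: {', '.join(missing_columns)}")]

    problems = []
    for row_number, row in enumerate(reader, start=2):  # row 1 is the header
        for field in REQUIRED_FIELDS:
            if not (row[field] or "").strip():
                problems.append((row_number, f"empty {field}"))
        timestamp = (row["consent_timestamp"] or "").strip()
        if timestamp:
            try:
                datetime.fromisoformat(timestamp)
            except ValueError:
                problems.append((row_number, "consent_timestamp is not ISO 8601"))
    return problems


# Hypothetical sample export with two deliberate defects.
sample = """contact_id,channel,consent_status,consent_timestamp,source
c1,sms,opted_in,2026-01-15T09:30:00+00:00,web_form
c2,sms,opted_out,,keyword_stop
c3,sms,opted_in,15/01/2026,front_desk
"""

for row_number, problem in validate_consent_export(sample):
    print(f"row {row_number}: {problem}")
```

Running the check on a real export early in the pilot surfaces missing timestamps or sources while you still have leverage to ask for a fix.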