If your website still funnels visitors into long, impersonal forms, you are leaving conversions on the table. The conversion-rate gains from conversational AI come from replacing that friction with context-aware prompts, real-time answers, and progressive profiling. This guide explains why conversational AI is replacing static forms and funnels and gives a pragmatic 90-day roadmap to design, implement, and measure chat experiences tied to your customer data platform (CDP). You will get the specific mechanics, KPIs, and A/B test steps a B2C marketing or product leader can hand to operations to produce measurable uplift.

1. The conversion problem for B2C websites: where static forms and funnels break down

Hard truth: long, one-size-fits-all forms are an engine for abandonment, not a conversion machine. When visitors land with a clear intent – book a class, try a membership, or check product availability – forcing them through a static form creates friction at the moment of intent and kills conversion velocity.

Primary failure mode: forms assume uniform intent. A visitor who wants to see tonight’s class times gets the same fourteen-field membership form as someone researching pricing. That mismatch increases cognitive load and raises the chance they close the tab instead of converting.

Operational gap: slow human follow-up turns warm interest cold. Even with good CRMs, many B2C sites rely on email or manual callbacks that arrive hours or days later. That delay is where high-intent visitors disappear and lifetime value is lost.

Why Conversational AI Is Replacing Static Forms and Funnels

Why it changes the game: conversational AI converts by meeting visitors where they are – with short, contextual prompts, immediate answers, and progressive profiling that only asks for what matters now. Instead of a static, linear funnel, a conversation adapts to signals in real time and routes qualified prospects straight into bookings or human handoff.

Tradeoff to acknowledge: a chat widget is not a plug-and-play fix. Poor intent models or generic responses increase frustration and fragmentation. The real work is mapping high-value intents, connecting those signals to your CDP, and defining crisp escalation rules so the bot raises only actionable leads to agents.

Concrete example: a mid-market fitness club replaced its 12-field trial signup form with a conversational flow that first asks for preferred class date and time, then offers quick replies for membership type. The bot checks availability in real time, books the trial, and pushes that session-level event into the customer data platform so staff can follow up within 15 minutes if needed. The result was a noticeable drop in abandonment on the trial funnel and faster time-to-booking.

- Where static funnels fail: long forms, poor context, delayed human follow-up, and lack of progressive profiling

- What conversational AI delivers: immediate answers, adaptive questioning, real-time routing, and session-level events tied to your CDP

Practical insight: prioritize intent clarity over conversational breadth. Start with 3 to 5 high-value intents – booking, pricing, product availability, and cancellations – and instrument each with success events. Measuring chat engagement alone is misleading; track chat-to-booking or chat-to-purchase conversion to see real impact.

Key stat: chatbots can handle up to 80% of routine customer inquiries, so design your bot to capture those routine wins and reserve human agents for high-complexity conversations. See IBM's analysis of chatbot statistics for more detail.

Next consideration: if you lack event-level analytics or a CDP connection, start there. Conversational AI without identity and event capture produces noisy data; you need event-level integration with platforms like Gleantap to close the loop between chat interactions and revenue.

2. Why Conversational AI Is Replacing Static Forms and Funnels

Direct point: conversational AI replaces static forms because it changes the conversion event from a single, high-friction submission into a sequence of small, value-driven decisions. That change is the core reason conversion rates improve when flows are designed around intent and outcomes rather than fields.

Behavioral reality: visitors expect instant, two-way responses. Recent research shows many consumers prefer messaging to traditional channels, and that preference matters when the alternative is a long form and delayed follow-up. A chat-driven path captures intent in the moment and keeps momentum that static funnels routinely lose.

Practical insight: treat the conversation as a funnel of micro-conversions. Map three measurable micro-conversions per use case (intent signal, qualification, booking or purchase) and instrument each as an event in your CDP. If you measure only widget opens or messages, you will overestimate impact; measure chat-to-booking or chat-to-purchase to see real ROI. Integrate with Gleantap or your CDP so those session events join customer profiles in real time.
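A minimal Python sketch of that micro-conversion funnel, assuming a hypothetical event stream of `(session_id, event_type)` pairs and illustrative stage names (`intent_signal`, `qualification`, `booking`); map them to whatever event names your CDP actually records.

```python
from collections import Counter

# Illustrative stage names, in funnel order; not a real CDP schema.
STAGES = ("intent_signal", "qualification", "booking")


def funnel_counts(events):
    """Count sessions that reached each micro-conversion stage.

    `events` is an iterable of (session_id, event_type) pairs. A session only
    counts toward a stage if it also reached every earlier stage, so the
    counts never increase down the funnel.
    """
    by_session = {}
    for session_id, event_type in events:
        by_session.setdefault(session_id, set()).add(event_type)

    counts = Counter()
    for event_types in by_session.values():
        for stage in STAGES:
            if stage not in event_types:
                break
            counts[stage] += 1
    return [(stage, counts[stage]) for stage in STAGES]


events = [
    ("s1", "intent_signal"), ("s1", "qualification"), ("s1", "booking"),
    ("s2", "intent_signal"),
    ("s3", "intent_signal"), ("s3", "qualification"),
]
stages = funnel_counts(events)
started, booked = stages[0][1], stages[-1][1]
print(stages)                                      # [('intent_signal', 3), ('qualification', 2), ('booking', 1)]
print(f"chat-to-booking: {booked / started:.0%}")  # chat-to-booking: 33%
```

Dividing the last stage by the first gives chat-to-booking; dividing by raw widget opens instead would inflate the apparent impact.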

How replacement actually works in practice

Mechanics that matter: conversational flows do four things static forms cannot do well at scale: surface immediate answers to reduce uncertainty, ask only the minimum data needed now, escalate high-intent leads to human agents with context, and re-engage across channels based on the conversation outcome. That combination shortens time to conversion and improves qualification quality.

Tradeoff to plan for: a bot with broad but shallow coverage increases false positives and frustrates users. It is better to be precise on fewer intents than to deploy a sprawling conversational tree. Expect an initial drop in response accuracy while you tune intents and mappings to your product catalog and scheduling APIs.

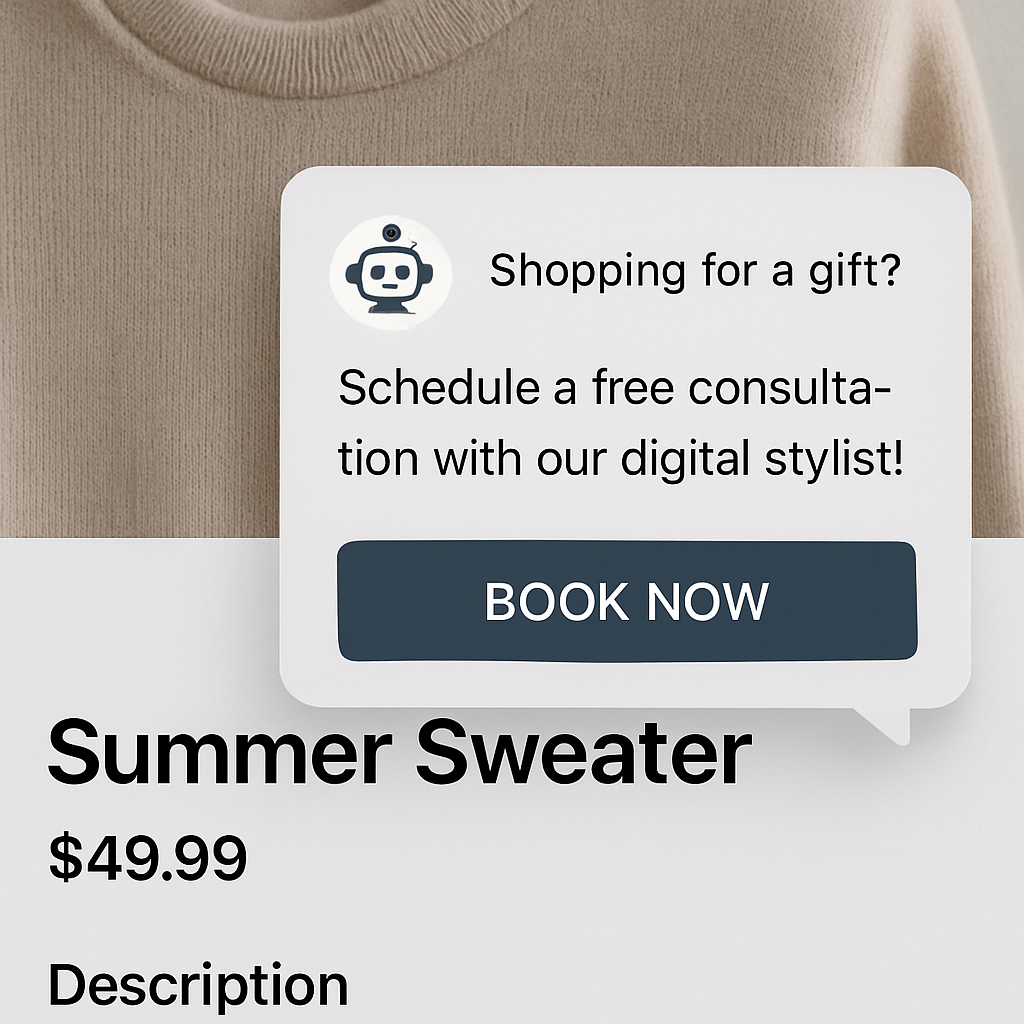

Concrete example: a family entertainment center swapped a multi-page ticket form for a chat that asks for date, party size, and preferred session in three steps. The bot checks inventory, surfaces add-ons like pizza packages, reserves the slot, and writes the booking event to the customer profile. Staff get a near-real-time notification with the session context, allowing a two-minute follow-up when needed, which increased confirmed reservations and reduced no-shows.

- Quick wins to replace first: exit-intent forms, availability checks, FAQ blocks that cause drop-off, and cart friction screens; swap each for a focused conversational path

- What to instrument immediately: intent label, qualification score, booking event, and escalation trigger

- Avoid this mistake: exposing long legal or sensitive fields inside the chat; capture identity after value is proven and use secure endpoints for sensitive data
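One way to make those four items concrete is a typed event record that rejects malformed events before they reach the CDP. This is a sketch under assumptions: the event names, field names, and validation rules below are hypothetical and should be aligned with your own tracking plan.

```python
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional

# Hypothetical event names; align them with your CDP's tracking plan.
ALLOWED_EVENTS = {"conversation_start", "intent_detected", "qualified", "booking", "escalation"}


@dataclass(frozen=True)
class ChatEvent:
    session_id: str
    event_type: str
    timestamp: datetime
    intent: Optional[str] = None                 # intent label, e.g. "book_trial"
    qualification_score: Optional[float] = None  # 0.0 to 1.0
    booking_id: Optional[str] = None             # required on booking events

    def __post_init__(self):
        # Reject malformed events at the source instead of cleaning them later.
        if self.event_type not in ALLOWED_EVENTS:
            raise ValueError(f"unknown event_type: {self.event_type}")
        score = self.qualification_score
        if score is not None and not 0.0 <= score <= 1.0:
            raise ValueError("qualification_score must be between 0 and 1")
        if self.event_type == "booking" and not self.booking_id:
            raise ValueError("booking events need a booking_id")

    def to_record(self):
        """Flatten to a JSON-friendly dict for an ingestion API."""
        record = asdict(self)
        record["timestamp"] = self.timestamp.isoformat()
        return record


event = ChatEvent(
    session_id="s-123",
    event_type="booking",
    timestamp=datetime(2024, 5, 1, 18, 30, tzinfo=timezone.utc),
    intent="book_trial",
    booking_id="b-987",
)
print(event.to_record()["timestamp"])  # 2024-05-01T18:30:00+00:00
```

Validating at the point of capture is cheaper than repairing mis-tagged events later, when every downstream KPI already depends on them.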

Measure conversation-to-conversion, not conversation volume. That metric is the single clearest predictor of real revenue uplift.

Key takeaway: start with a narrow set of high-value intents, instrument session events into your CDP, and set clear escalation SLAs. Conversational AI only replaces static funnels when it is both precise and measurable.

3. Five conversion mechanics: exactly how conversational AI moves the needle

Direct claim: five concrete mechanics explain why conversion-rate improvements from conversational AI are repeatable, measurable, and controllable when you build them into the product and analytics stack.

Mechanic 1 — Lowering friction with micro-decisions

What it does: break a single high-friction form into a series of tiny choices and confirmations so visitors convert in short steps rather than one long leap. Result: higher completion and fewer abandonments, because each micro-decision carries less cognitive load.

Mechanic 2 — Personalization at the moment of intent

What it does: combine session signals and customer history from your CDP to serve targeted offers, incentives, or availability. This is not cosmetic personalization — it changes the offer in real time (different trials, urgency windows, or discount framing) to match intent signals.

Mechanic 3 — Progressive commitment and staged identity capture

What it does: ask for the minimum data to complete the immediate outcome, then enrich profile data later through follow-ups. That reduces initial drop-off while still allowing full qualification over time.
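Staged identity capture can be expressed as a simple field plan: ask only for what the current outcome needs, and defer everything else. A minimal Python sketch; the outcomes and field names are hypothetical examples for a fitness-club trial booking.

```python
# Hypothetical field plan: what each outcome needs now versus later.
REQUIRED_NOW = {
    "book_trial": ["class_date", "class_time", "phone"],
    "check_availability": ["class_date"],
}
ENRICH_LATER = ["email", "fitness_goal", "membership_interest"]


def next_question(outcome, profile):
    """Return the next field to ask for in-chat, or None when the outcome can complete."""
    for field in REQUIRED_NOW.get(outcome, []):
        if not profile.get(field):
            return field
    return None


def deferred_fields(profile):
    """Fields to collect later through follow-ups, not during the conversation."""
    return [field for field in ENRICH_LATER if not profile.get(field)]


profile = {"class_date": "2024-06-01"}
print(next_question("book_trial", profile))  # class_time
profile.update(class_time="18:00", phone="+15550100")
print(next_question("book_trial", profile))  # None
print(deferred_fields(profile))              # ['email', 'fitness_goal', 'membership_interest']
```

Ordering the slot fields before the phone number mirrors the pattern in the examples here: contact details are requested only after the visitor has picked a time.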

Mechanic 4 — Real-time objection handling and qualification

What it does: answer the specific questions that cause visitors to pause — price, availability, safety, or scheduling — and surface qualification signals to route hot leads. This short-circuits hesitation and converts intent into bookings or purchases faster.
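A rule-based sketch of surfacing qualification signals and routing hot leads. The signal names, point weights, and the 70-point threshold are hypothetical assumptions; calibrate them against your own chat-to-booking history before relying on them.

```python
# Hypothetical signal weights (points out of 100); calibrate against your own
# historical chat-to-booking data before trusting them.
SIGNAL_POINTS = {
    "selected_slot": 40,
    "asked_price": 20,
    "returning_visitor": 20,
    "contact_given": 20,
}
HOT_LEAD_THRESHOLD = 70


def qualification_score(signals):
    """Sum the points for every signal observed in the session."""
    return sum(points for name, points in SIGNAL_POINTS.items() if signals.get(name))


def route(signals):
    """Decide where the conversation goes next."""
    if signals.get("red_flag"):
        return "human_agent"        # e.g. a safety or complaint keyword
    if qualification_score(signals) >= HOT_LEAD_THRESHOLD:
        return "booking_handoff"    # hot lead: move straight to booking or an agent
    return "answer_objections"      # keep answering price, availability, scheduling questions


print(route({"selected_slot": True, "asked_price": True, "contact_given": True}))  # booking_handoff
print(route({"asked_price": True}))                                                # answer_objections
```

Integer points keep the threshold comparison exact; a trained intent model could replace the weights later without changing the routing interface.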

Mechanic 5 — Persistent, contextual multi-channel follow-up

What it does: if the on-site interaction doesn’t close, use session context to trigger timed SMS, email, or agent outreach that references the conversation. When follow-up remembers the chat context, conversion velocity and recovery rates rise.
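A minimal sketch of context-carrying follow-up scheduling in Python. The outcome names, channels, and delays are hypothetical; the point is that every scheduled message keeps the `conversation_id` and what the visitor already said.

```python
from datetime import datetime, timedelta

# Hypothetical follow-up plan keyed by how the on-site conversation ended.
FOLLOW_UP_PLAN = {
    "abandoned_after_slot": [("sms", timedelta(minutes=30)), ("email", timedelta(hours=24))],
    "pricing_question_only": [("email", timedelta(hours=4))],
    "escalation_unanswered": [("agent_call", timedelta(minutes=15))],
}


def plan_follow_ups(outcome, ended_at, context):
    """Schedule follow-ups that carry the conversation context forward.

    `context` holds the conversation_id plus whatever the visitor already told
    the bot (intent, chosen slot), so messages can reference it instead of
    starting over. Outcomes without a plan (e.g. a completed booking) get none.
    """
    return [
        {
            "channel": channel,
            "send_at": ended_at + delay,
            "conversation_id": context["conversation_id"],
            "template_vars": {"intent": context.get("intent"), "slot": context.get("slot")},
        }
        for channel, delay in FOLLOW_UP_PLAN.get(outcome, [])
    ]


ended = datetime(2024, 5, 1, 18, 0)
steps = plan_follow_ups(
    "abandoned_after_slot", ended,
    {"conversation_id": "c-42", "intent": "book_trial", "slot": "Thu 7pm yoga"},
)
print([(s["channel"], s["send_at"].isoformat()) for s in steps])
# [('sms', '2024-05-01T18:30:00'), ('email', '2024-05-02T18:00:00')]
```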

Practical trade-off: prioritizing breadth over depth kills performance. Teams commonly try to train a bot on dozens of intents before validating one or two high-value paths. Focus on instrumenting success events for 2–3 intents first, then expand. Also, personalization requires reliable identity resolution in your CDP; without it, tailored offers will misfire and reduce trust.

Concrete example: A regional urgent-care chain implemented a triage path that captures symptoms in three quick prompts, suggests nearest available slots, and requests contact details only after a slot is selected. The bot writes the booking event into the CDP and triggers a two-minute callback only for red-flag cases, which raised confirmed appointment rates and cut unnecessary agent time.

- Implementation priority: instrument the micro-conversion that equals revenue (chat-to-booking or chat-to-purchase) before optimizing NLP accuracy.

- Measurement rule: track conversation-to-conversion rather than widget opens or message counts; that aligns optimization to business outcomes.

- Integration note: connect session events to your CDP (for example, see Gleantap platform) so personalization and follow-up use the same identity graph.

According to Business Insider, 40% of users don’t care whether a human or a bot helps them as long as the problem is solved; design for speed and clarity first, then for voice and personality.

Key judgment: start by fixing the flow that delivers immediate revenue. Improving conversational AI performance without a CDP link or event-level tracking buys you higher engagement metrics but not higher revenue.

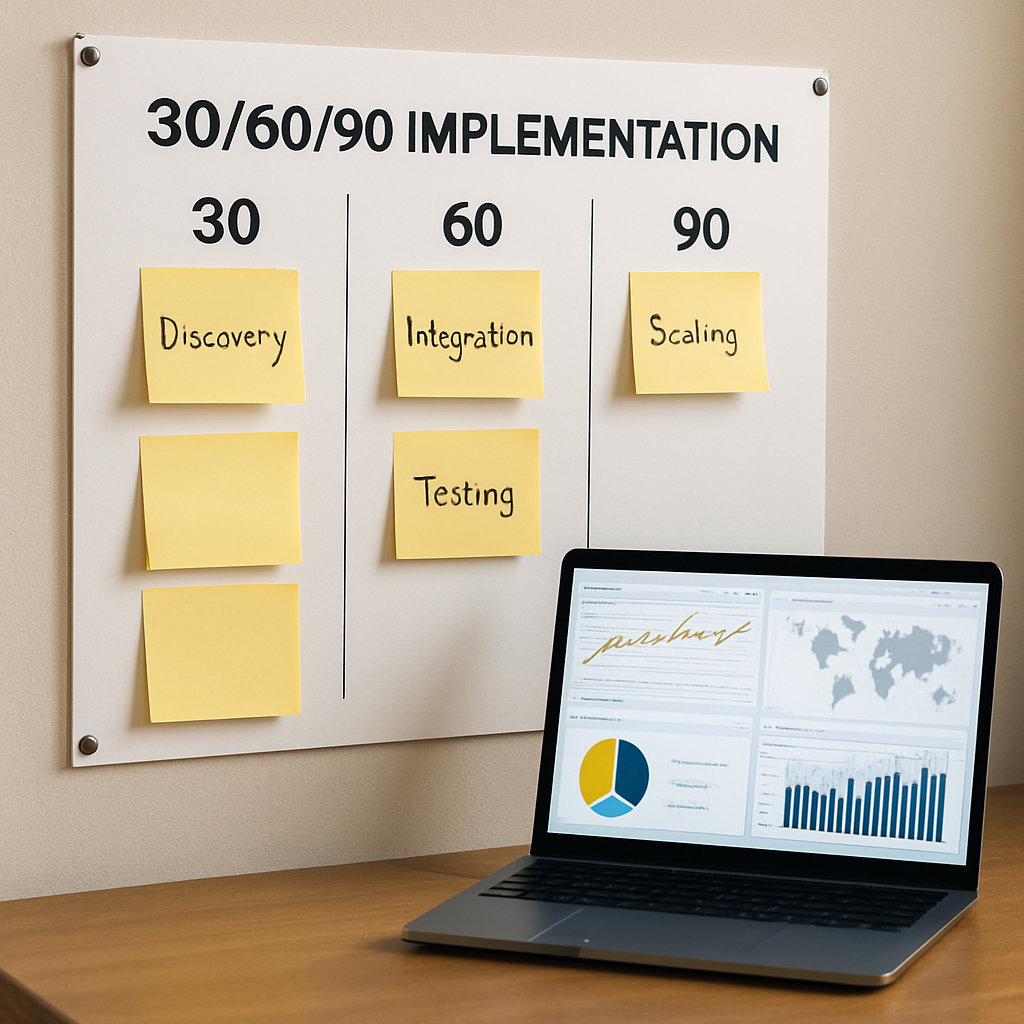

4. Implementation roadmap for B2C teams (30, 60, 90 day plan)

Direct start: launch conversational AI as a targeted experiment, not a full-site replacement. The fastest wins come from fixing one high-friction revenue path and instrumenting it end-to-end so you can compare the conversational path's conversion rate against the existing form-based experience.

Days 0–30: discovery, baselines, and low-risk prototypes

Set a narrow scope: pick a single page or flow that drives revenue (membership checkout, class booking, or product detail). Map the current drop-off points and record baseline metrics in your CDP for chat-initiated sessions, time-to-conversion, and lead quality. Connect at least one event stream from the site into the Gleantap platform or your CDP so session events join profiles in real time.

Prototype quickly: build a minimal conversational path that achieves the immediate outcome in 3 interactions or fewer. Design the flow to capture just one required field up front, then stage follow-up questions after the booking or purchase is confirmed. Instrument micro-conversion events at each step so you can attribute lift precisely.

Days 31–60: build, integrate, and validate

Integration work: connect booking, inventory, or checkout APIs and implement identity stitching so the bot can personalize offers using known profile signals. Define escalation criteria that create a single, structured handoff payload for human agents to avoid contextless transfers.
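Identity stitching is normally done inside the CDP, but the core logic is small enough to sketch. This toy Python version assumes email is the stitching key and that a newer email match overrides an older alias; both are simplifying assumptions, not how any particular CDP resolves conflicts.

```python
import uuid


class IdentityGraph:
    """Toy in-memory identity graph. A CDP performs this resolution server-side;
    this only illustrates the logic."""

    def __init__(self):
        self.by_email = {}  # normalized email -> profile_id
        self.aliases = {}   # anonymous chat/session id -> profile_id

    def resolve(self, anonymous_id):
        """Return the known profile for a session, or None if it is still anonymous."""
        return self.aliases.get(anonymous_id)

    def stitch(self, anonymous_id, email):
        """Attach an anonymous session to a profile once the visitor shares an email."""
        key = email.strip().lower()
        profile_id = self.by_email.get(key)
        if profile_id is None:
            # New email: reuse any profile already tied to this session, else create one.
            profile_id = self.aliases.get(anonymous_id) or str(uuid.uuid4())
            self.by_email[key] = profile_id
        self.aliases[anonymous_id] = profile_id
        return profile_id


graph = IdentityGraph()
first = graph.stitch("anon-1", "Ana@Example.com ")
second = graph.stitch("anon-2", "ana@example.com")
print(first == second)          # True: two chat sessions, one profile
print(graph.resolve("anon-3"))  # None: never identified
```

Normalizing the key before lookup is what lets two sessions with differently typed emails land on one profile; without it, personalization would treat the same member as two strangers.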

Quality first: focus on intent precision for 2–3 high-value intents. Tune utterances and fallback responses, but accept imperfect NLP accuracy during this phase; the priority is reliable event capture and correct routing. If personalization misfires because identity resolution is weak, it will harm conversion more than help.

Days 61–90: test, optimize, and scale

A/B test against the control: run a controlled experiment comparing the conversational path to your existing form for a representative traffic slice. Measure chat-to-booking or chat-to-purchase conversion, time to conversion, and lead-to-customer conversion rate in your CDP. Do not optimize for engagement metrics alone.
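To judge whether the chat arm genuinely beats the form, a two-proportion z-test is a common starting point. This Python sketch uses the pooled normal approximation and only the standard library; it assumes independent sessions and reasonably large samples, and the counts in the example are illustrative.

```python
from math import erf, sqrt


def two_proportion_z_test(conversions_a, sessions_a, conversions_b, sessions_b):
    """Compare control (A, the form) with treatment (B, the chat) conversion rates.

    Returns (absolute lift, z statistic, two-sided p-value) using the pooled
    normal approximation, which assumes reasonably large samples.
    """
    rate_a = conversions_a / sessions_a
    rate_b = conversions_b / sessions_b
    pooled = (conversions_a + conversions_b) / (sessions_a + sessions_b)
    se = sqrt(pooled * (1 - pooled) * (1 / sessions_a + 1 / sessions_b))
    if se == 0:
        raise ValueError("need at least one conversion and one non-conversion")
    z = (rate_b - rate_a) / se
    normal_cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))  # standard normal CDF at |z|
    p_value = 2 * (1 - normal_cdf)
    return rate_b - rate_a, z, p_value


lift, z, p = two_proportion_z_test(100, 1000, 130, 1000)
print(f"lift={lift:.1%} z={z:.2f} p={p:.3f}")
```

With 100 of 1,000 form sessions and 130 of 1,000 chat sessions converting, this gives a 3-point lift, z of roughly 2.1, and a two-sided p-value below 0.05.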

Operationalize: finalize the SLA for human handoffs (for example, a first response in under 48 minutes for escalations), train agents on the handoff payload, and deploy automated SMS/email follow-ups triggered by the conversation outcome. Once the test proves positive, scale the flow to adjacent pages and intents in controlled waves.

- Minimum technical checklist: set event schema, session stitching, and real-time API hooks so booking events write to the CDP.

- Privacy and consent: implement explicit consent capture on entry when required and ensure any sensitive inputs use secure endpoints and retention policies.

- Monitoring: create alerts for conversion drops, high fallback rates, and latency spikes; watch escalation queue length to avoid operational overload.

- Analytics alignment: map conversational events to the same revenue funnel in your analytics so chat-driven revenue is not siloed.
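The monitoring item in that checklist can start as a simple threshold check run on each metrics snapshot. A Python sketch; the threshold values and metric names are hypothetical and should be tuned to your own baselines.

```python
# Hypothetical thresholds; tune them to your own baselines and traffic.
THRESHOLDS = {
    "conversion_drop_pct": 20.0,  # relative drop versus the baseline conversion rate
    "fallback_rate": 0.25,        # share of turns where the bot fell back to a default reply
    "p95_latency_ms": 3000,
    "escalation_queue": 15,       # conversations waiting for a human
}


def check_alerts(current, baseline_conversion):
    """Return human-readable alerts for one monitoring snapshot."""
    alerts = []
    if baseline_conversion > 0:
        drop_pct = (baseline_conversion - current["conversion_rate"]) / baseline_conversion * 100
        if drop_pct >= THRESHOLDS["conversion_drop_pct"]:
            alerts.append(f"conversion down {drop_pct:.0f}% vs baseline")
    if current["fallback_rate"] > THRESHOLDS["fallback_rate"]:
        alerts.append("high fallback rate")
    if current["p95_latency_ms"] > THRESHOLDS["p95_latency_ms"]:
        alerts.append("latency spike")
    if current["escalation_queue"] > THRESHOLDS["escalation_queue"]:
        alerts.append("escalation queue overloaded")
    return alerts


snapshot = {"conversion_rate": 0.05, "fallback_rate": 0.4, "p95_latency_ms": 5000, "escalation_queue": 30}
for alert in check_alerts(snapshot, baseline_conversion=0.10):
    print(alert)
```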

Concrete example: A regional wellness studio replaced a 10-field sign-up form on its trial page with a chat that asks for date, preferred class type, and preferred time in three clicks, then reserves the slot and requests contact details only after availability is confirmed. The studio wrote the booking event into their CDP, triggered an SMS reminder, and routed only ambiguous cases to staff. This shortened their booking path and freed agents to focus on higher-value conversations.

Important trade-off: speed to market versus depth of coverage. Deploy narrow, measurable flows quickly; broad conversational coverage is tempting but dilutes data and increases false positives. Build credibility with measurable wins before expanding intents.

Practical judgment: teams that skip the CDP integration or fail to instrument micro-conversions end up with higher chatbot engagement but no revenue signal. Prioritize end-to-end attribution and a 60–90 day test window to reach meaningful conclusions. If the experiment fails, iterate the flow or revert to the control; poor chat experiences damage brand trust faster than a slightly worse form.

Next consideration: remember why conversational AI is replacing static forms and funnels: it turns monolithic submissions into measurable, incremental decisions. Use that shift to align experiments to revenue events, not to vanity engagement metrics.

5. Measurement and KPIs: what to track and sample dashboard queries

Direct point: measurement must link each conversational interaction to a revenue outcome; otherwise you are optimizing for busyness, not business. The shift from static forms to conversational flows is only valuable when you can prove chat-driven sessions produce more bookings, purchases, or qualified leads than the form they replace.

Core metrics and how to interpret them

| Metric | What it measures | Why it matters / how to compute |
| --- | --- | --- |
| Conversation-to-conversion rate | Share of chat sessions that end in a booking or purchase | Count sessions with event_type = conversation_start where a downstream booking or purchase event occurs within 48 hours, divided by total conversation sessions |
| Time-to-conversion (median) | Speed from first message to revenue event | median(booking_timestamp - conversation_start_timestamp); short times indicate effective qualification and low friction |
| Qualified lead yield | Proportion of conversations that meet your lead quality rules | Qualified leads / total conversations; use your CDP rules (e.g., intent score > 0.7 and contact verified) |
| Flow drop-off index | Where users exit within the conversational path | Event sequence counts by step (step 1 → step 2 → step 3) to pinpoint the highest abandonment step |
| Multi-channel recovery lift | Incremental bookings recovered by follow-up (SMS/email) after an unfinished chat | Bookings that reference a prior conversation_id within 7 days vs. bookings without a prior conversation |

Practical limitation: small sites will hit statistical noise quickly. If you get fewer than a few hundred chat sessions per month on the tested page, conversion-rate swings will be unreliable. In those cases prioritize absolute booking lift and time-to-conversion over percentage-based claims.
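To see why a few hundred sessions is rarely enough, here is a rough sample-size sketch in Python using the standard normal-approximation formula for comparing two proportions. The 10% baseline, 13% target, 5% significance level, and 80% power are illustrative assumptions, not benchmarks.

```python
from math import ceil
from statistics import NormalDist


def sessions_per_arm(baseline_rate, expected_rate, alpha=0.05, power=0.8):
    """Approximate sessions needed in each arm to detect the given difference.

    n = (z_{1-alpha/2} + z_{power})^2 * (p1(1-p1) + p2(1-p2)) / (p1 - p2)^2
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = baseline_rate * (1 - baseline_rate) + expected_rate * (1 - expected_rate)
    return ceil((z_alpha + z_beta) ** 2 * variance / (baseline_rate - expected_rate) ** 2)


print(sessions_per_arm(0.10, 0.13))
```

With those inputs it asks for about 1,770 sessions in each arm; larger expected lifts need far fewer, which is why small sites should lean on absolute booking counts instead.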

Attribution judgment: use the conversation as a primary touch if it contains the decision signal (selected slot, paid checkout). Do not double-credit both widget open and last-click; pick the event that represents user intent completion and map it into your CDP as the revenue event.

Sample SQL queries (Postgres-style) to power dashboards

Chat-initiated bookings:

```sql
-- Chat sessions followed by a booking from the same user within 48 hours
SELECT COUNT(DISTINCT e.session_id) AS bookings_from_chat
FROM events e
JOIN events b
  ON b.user_id = e.user_id
 AND b.event_type = 'booking'
 AND b.timestamp BETWEEN e.timestamp AND e.timestamp + INTERVAL '48 hours'
WHERE e.event_type = 'conversation_start';
```

Conversation-to-conversion rate:

```sql
WITH conv AS (
  SELECT session_id, MIN(timestamp) AS start_ts
  FROM events
  WHERE event_type = 'conversation_start'
  GROUP BY session_id
), book AS (
  -- DISTINCT so a session with several bookings is counted once
  SELECT DISTINCT session_id
  FROM events
  WHERE event_type = 'booking'
)
SELECT COUNT(book.session_id)::decimal * 100
       / NULLIF(COUNT(conv.session_id), 0) AS conv_to_booking_pct
FROM conv
LEFT JOIN book ON conv.session_id = book.session_id;
```

Median time to conversion (minutes):

```sql
SELECT percentile_disc(0.5) WITHIN GROUP (
         ORDER BY EXTRACT(EPOCH FROM (b.timestamp - c.timestamp)) / 60
       ) AS median_minutes
FROM events c
JOIN events b ON c.session_id = b.session_id
WHERE c.event_type = 'conversation_start'
  AND b.event_type = 'booking'
  AND b.timestamp >= c.timestamp;
```

Drop-off by step (funnel snapshot):

```sql
SELECT step_name, COUNT(DISTINCT session_id) AS sessions
FROM conversation_steps
WHERE conversation_id IN (
  SELECT id FROM conversations WHERE created_at >= now() - INTERVAL '30 days'
)
GROUP BY step_name
ORDER BY sessions DESC;
```

Intent performance (which paths convert):

```sql
-- Cast before dividing; integer division would round every rate down to 0
SELECT intent,
       COUNT(DISTINCT session_id) AS sessions,
       COUNT(DISTINCT session_id) FILTER (WHERE booking_id IS NOT NULL) AS bookings,
       (COUNT(DISTINCT session_id) FILTER (WHERE booking_id IS NOT NULL))::decimal
         / NULLIF(COUNT(DISTINCT session_id), 0) AS conv_rate
FROM conversation_events
WHERE created_at >= now() - INTERVAL '30 days'
GROUP BY intent
ORDER BY conv_rate DESC;
```

Concrete example: A regional yoga studio instrumented the exact queries above and built a dashboard showing intent-level conversion. They discovered the schedule-check intent converted at three times the rate of general pricing questions, so they forked a focused flow for schedule-check and routed those sessions directly to live booking. The result: faster bookings and fewer agent escalations, measured directly in their CDP.

- Dashboard widgets to include: KPI cards for conversation-driven revenue, median time-to-booking, and qualified lead yield

- Operational views: active escalation queue length, average agent first-response, and fallback-rate by intent

- Trend analyses: 7/30/90 day comparisons and channel-attribution (chat origin vs. organic) to spot regressions

Key rule: prioritize event accuracy over fancy ML reports. If your conversation_start or booking events are mis-tagged, every derived KPI is garbage. Validate the event stream against manual session samples before trusting automated alerts.

Integration note: push these events into your CDP so conversation context shows up on profiles. For teams using Gleantap, map conversation_start, intent, booking, and escalation into the same identity graph so follow-up flows and attribution work without manual reconciliation. See Gleantap platform for event mapping examples.

Next consideration: run a controlled 60–90 day experiment instrumented with these queries and treat the outcome as an operational gate — if conversation-driven revenue and time-to-booking do not improve, iterate the flow or reallocate resources to different high-intent pages rather than expanding coverage blindly.

6. Real-world examples and comparable case studies

Straight to the point: case studies show conversational AI increases conversion when the experiment aligns product, measurement, and operations, but the size and durability of that lift depend on how you compare results and what you do after the bot converts someone. That is the heart of why conversational AI is replacing static forms and funnels: many wins come from changing the conversion event, not from adding a widget.

What to compare across case studies

Comparison checklist: ensure each case you read matches these attributes before trusting headline numbers: traffic source parity (paid vs organic), attribution window used (24 hours, 7 days), whether the bot had backend integrations (inventory, booking APIs), and operational SLAs for human handoff. If a case omits any of these, its conversion claims are likely overstated or not applicable to your setup.

Practical trade-off: many vendors highlight immediate conversion lift but omit the operational consequence. Higher booking volume without adjusted staffing or inventory rules creates canceled reservations and returns the user experience to square one. Plan capacity and cancellation policies before scaling a winning flow.

Three brief, comparable examples (what they actually teach you)

Retail discovery — Sephora-style: retail conversational experiences that combine product discovery with instant inventory checks convert better on product detail pages than static add-to-cart forms because they remove uncertainty. The practical lesson is to integrate the bot with your SKU and stock endpoints so recommendations are actionable instead of aspirational. See vendor reports such as Intercom for similar retail implementations.

Healthcare scheduling — Cleveland Clinic-style: triage and scheduling bots reduce manual queueing and accelerate appointment completion when they include clear consent capture and strict data handling. The main limitation is compliance: if your flows record sensitive details without proper safeguards, any conversion gains are legally and operationally fragile. For background on conversational triage, consult Drift State of Conversational Marketing.

SaaS-to-B2C mapping — learnings from Drift and Intercom studies: business-to-business case studies often show shortened qualification cycles; map those lessons to B2C by focusing on high-intent micro-flows (trial booking, class scheduling, checkout help). The tactical shift is to treat the conversation as a qualification gate that outputs a single structured event the CDP can act on.

Concrete example (hypothetical): a 12-location fitness club replaced a ten-field trial signup with a three-step conversational flow that checks class availability via API, reserves a slot, and then requests contact details. The club tracked confirmed bookings per week (rather than widget opens), saw a clear increase in confirmed sessions, and used an A/B test to translate that incremental number into revenue impact. The key operational change: they enforced a one-hour SLA for follow-up on escalations to avoid overbooking and confusion.

- How to translate big-brand wins to mid-market: replicate the integration depth (inventory/booking APIs + CDP writebacks) first; do not chase broad conversational coverage before you can reliably tag the outcome event.

- Attribution nuance: when comparing case studies, normalize the attribution window and the baseline conversion funnel. A bot that pushes users to immediate checkout within the same session is not the same as one that triggers a later email sequence.

- Operational indicator to watch: escalation queue length and first-response SLA. Those metrics predict whether initial conversion gains will stick or deteriorate under load.

Case-study judgment: conversational AI increases conversions only when the backend can honor the promise made in-chat — inventory, scheduling, and timely human follow-up are not optional.

If you want to model ROI quickly: incremental confirmed bookings × conversion-to-paid rate × average revenue per customer = incremental revenue. Use your CDP to pull actual conversion-to-paid rates rather than vendor averages. For implementation examples, see the Gleantap platform.
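The same ROI formula as a small Python helper. The example inputs (40 incremental bookings, a 25% conversion-to-paid rate, $600 average revenue per customer) are illustrative only; pull real values from your A/B test and CDP.

```python
def incremental_revenue(incremental_bookings, conversion_to_paid, revenue_per_customer):
    """Incremental confirmed bookings x conversion-to-paid rate x average revenue per customer."""
    if not 0.0 <= conversion_to_paid <= 1.0:
        raise ValueError("conversion_to_paid is a rate between 0 and 1")
    return incremental_bookings * conversion_to_paid * revenue_per_customer


# Illustrative inputs only: replace them with measured values.
print(incremental_revenue(40, 0.25, 600))  # 6000.0
```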

Common misunderstanding: teams assume a higher conversation-to-conversion rate is proof the bot is better. In practice, novelty and targeted traffic can inflate short-term rates. Only a controlled A/B test with consistent traffic slices and the same attribution window proves persistent improvement.

Next consideration: when you evaluate case studies, insist on operational details and measurement parity. If a study ignores handoff SLAs, inventory sync, or CDP event capture, treat its numbers as aspirational not prescriptive.

7. Operational considerations, privacy, and when not to use conversational AI

Bottom line: conversion uplift from chat is conditional. Operational capacity and privacy controls determine whether a conversion-rate improvement is real and sustainable or a short-lived spike that collapses under operational strain.

Staffing and handoff rules matter more than NLP bells and whistles. Design a single structured handoff payload (intent, session_id, last 3 messages, confidence score) so agents get context and can act within the SLA. Expect the first month to reveal the real workload: escalation volume often outpaces predictions, and slow or contextless handoffs erode conversion gains quickly.
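A minimal Python sketch of that structured handoff payload, using the fields listed in the paragraph (intent, session_id, last 3 messages, confidence score). The optional `profile_id` field and the function name are hypothetical additions for illustration.

```python
import json


def build_handoff_payload(session_id, intent, confidence, transcript, profile_id=None):
    """Assemble the structured context an agent needs without reading the full chat.

    profile_id is an optional, hypothetical field for teams that have already
    stitched the visitor to a CDP profile.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return {
        "session_id": session_id,
        "profile_id": profile_id,
        "intent": intent,
        "confidence": round(confidence, 2),
        "last_messages": transcript[-3:],  # only the three most recent messages
    }


payload = build_handoff_payload(
    "s-123",
    "cancel_membership",
    0.64,
    ["Hi", "I want to cancel", "Is there a fee?", "My contract started in March"],
)
print(json.dumps(payload))
```

Keeping the payload to a fixed, small set of fields is what lets agents act within the SLA instead of scrolling through transcripts.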

Monitoring and capacity planning are non-negotiable. Track queue length, first-response SLA, and escalation false-positive rate in real time. Automate capacity limits: if escalations exceed a threshold, route to a scheduled callback rather than letting the queue grow, because rising agent latency kills conversion momentum more predictably than poor initial bot accuracy.
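One way to automate that capacity limit is to compare a projected wait against the first-response SLA before offering a live agent. A Python sketch; the projection (queue per agent × average handle time) is a deliberately crude assumption, and the parameter names are hypothetical.

```python
def escalation_route(queue_length, agents_online, avg_handle_min, sla_min):
    """Send an escalation to a live agent only if the projected wait fits the SLA.

    Projected wait = conversations already queued per agent x average handle
    time. This is a crude estimate; use your contact-center tooling's own
    forecast if you have one.
    """
    if agents_online <= 0:
        return "scheduled_callback"
    projected_wait_min = queue_length / agents_online * avg_handle_min
    if projected_wait_min <= sla_min:
        return "live_agent"
    return "scheduled_callback"


print(escalation_route(queue_length=4, agents_online=2, avg_handle_min=5, sla_min=30))   # live_agent
print(escalation_route(queue_length=20, agents_online=2, avg_handle_min=5, sla_min=30))  # scheduled_callback
```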

Privacy is a gating factor, not an afterthought. For flows that touch health, financial, or other sensitive attributes you must capture explicit consent before collecting details, store sensitive inputs via secure, access-controlled endpoints, and implement retention and deletion policies. If you are subject to HIPAA or GDPR, plan for pseudonymization in your CDP and separate storage for any personally identifying notes the agent might add.
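For the pseudonymization step, a keyed hash is one common approach: the CDP can still join events on a stable token, but the raw email or phone number is not stored alongside them. A minimal Python sketch using the standard `hmac` module; key management is out of scope and the key shown is a placeholder. Note that pseudonymized data still counts as personal data under GDPR, so this reduces exposure rather than removing obligations.

```python
import hashlib
import hmac


def pseudonymize(identifier, secret_key):
    """Replace a direct identifier (email, phone) with a keyed SHA-256 token.

    The same input and key always give the same token, so profiles can still be
    joined in the CDP; without the key, the token cannot be recomputed from a
    guessed email. Store the key separately from the data.
    """
    normalized = identifier.strip().lower().encode("utf-8")
    return hmac.new(secret_key, normalized, hashlib.sha256).hexdigest()


key = b"load-me-from-a-secrets-manager"  # placeholder; never hard-code a real key
token = pseudonymize("Ana@Example.com", key)
print(token == pseudonymize(" ana@example.com ", key))  # True
```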

Trade-off to accept: tighter privacy and audit trails increase implementation friction and slightly slow time-to-market, but failing to build them costs you legally and operationally. Err on the side of minimal in-chat capture: collect the outcome needed to complete the transaction and defer sensitive enrichment to secure follow-up.

When not to use conversational AI. Do not deploy a bot when the use case requires complex legal advice or contract negotiation, mandates human certification of accuracy, or handles high-sensitivity personal data without proper safeguards, or when your site traffic is too low to reach statistical significance on the metrics you care about. If you lack event-level analytics and a CDP link (for example, to the Gleantap platform), a bot will produce engagement noise rather than measurable conversion lift.

Concrete example: A regional urgent-care group piloted a symptom-checker that initially logged free-text symptom data in the chat transcript and saw strong engagement but ran into compliance audits. They removed symptom capture from the open chat, added an explicit consent screen, and limited the bot to appointment booking plus a secure follow-up form for clinical intake. The revised flow preserved booking velocity while removing regulatory risk — bookings remained measurable in their CDP and agent time dropped because handoffs included structured context.

Operational preflight checklist

- Define escalation payload: a fixed JSON schema agents consume without reading full transcripts

- Set SLAs before launch: first-response and resolution times tied to traffic forecasts

- Data minimization rule: only capture fields required to complete the immediate outcome in-chat

- Retention & access: retention windows, audit logs, and RBAC for sensitive fields

- Testing plan: synthetic peak-load tests and privacy compliance review before A/B testing

- Attribution wiring: map chat events into your CDP so conversation outcomes feed revenue funnels

If you cannot map chat session events into a CDP and prove chat-to-revenue, the conversation will remain an interesting metric, not a business lever.

Operational judgment: start small and instrument end-to-end. A single well-instrumented flow with strict privacy controls and an enforceable SLA gives you credible data on conversational AI performance. Expand only after the CDP shows consistent, attributable lift in chat-driven bookings or purchases.

Next consideration: if your priority is reliably increasing bookings or purchases, invest first in the operational plumbing and privacy design that make measurable gains defensible. The shift from static forms and funnels to conversational AI only translates into durable revenue when operations and compliance can sustain the increased conversion velocity.

Frequently Asked Questions

Direct start: this FAQ targets the practical questions teams ask once they decide to test conversational AI. It assumes you accept the premise that conversational AI is replacing static forms and funnels and want concrete answers about measurement, operations, risk, and next steps.

What conversion-rate uplift can I reasonably expect from conversational AI?

Short answer: expect a measurable uplift, not magic. Many mid-market B2C pilots produce low-double-digit percentage improvements in bookings or purchases when the bot replaces a high-friction form and the experiment is instrumented end-to-end. The size of the lift depends on baseline friction, traffic mix, and how tightly you tie session events to revenue in your CDP.

How exactly does conversational AI cut form abandonment?

Mechanism: It reduces perceived effort by turning one big decision into sequential micro-decisions, answering the questions that stop people, and deferring identity capture until value is proven. The operational trade-off is you must capture the outcome event reliably or you will optimize engagement without capturing revenue.

Which KPIs prove the bot is driving business results?

Focus on outcome metrics: track the share of chat sessions that create an actionable revenue event in your CDP, median time from first message to conversion, and the rate at which escalations become paying customers. Add guardrail metrics like escalation false positive rate and SLA breach percentage so volume gains do not hide operational breakdowns.

How do I integrate the bot with my CRM or CDP without breaking existing funnels?

Integration approach: push session-level events and resolved intents into your identity graph in near real time, map those events to existing revenue objects, and use a single handoff payload for human agents. If you use Gleantap, map conversation events to the same profile keys so follow-up flows and attribution work without manual reconciliation.

Are there privacy or regulatory pitfalls I need to plan for?

Be explicit with sensitive flows: implement consent screens for regulated data, minimize in-chat capture of sensitive fields, and store any clinical or financial inputs in secure, auditable endpoints. The trade-off is longer implementation and testing but far lower legal and operational risk.

How long should an A/B test run and what can break the experiment?

Minimum window: run for 60 to 90 days or until you reach a stable sample for your revenue event. Things that break tests include uneven traffic segmentation, seasonality, SLA breaches on escalations, and poor event tagging. If any of those occur, pause the test and fix the instrumentation before drawing conclusions.

Concrete example: A regional retail chain replaced a product page form with a focused chat flow that checked inventory and offered same-day pickup. They routed only confirmed availability sessions to checkout, wrote the pickup reservation into their CDP, and automated a reminder SMS. The pilot increased same-day pickups while agent time spent on inventory questions dropped by half because the bot handled the routine checks.

Testing guardrails: Require a minimum of 300 chat sessions on the tested page, keep escalation false positives below 12 percent, enforce a first-response SLA under 30 minutes for escalations, and set a target uplift threshold before scaling – for example a minimum absolute increase of X confirmed bookings per week relevant to your revenue model.
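Those guardrails translate directly into a go/no-go check. In this Python sketch the session, false-positive, and SLA thresholds come from the paragraph above; the uplift target stays a caller-supplied parameter because the "X confirmed bookings per week" value depends on your own revenue model. The function and argument names are hypothetical.

```python
def ready_to_scale(sessions, false_positive_rate, first_response_min,
                   extra_bookings_per_week, min_extra_bookings):
    """Apply the testing guardrails before expanding a chat flow.

    min_extra_bookings is your own weekly uplift target; it is deliberately a
    parameter, not a default.
    """
    checks = {
        "enough_sessions": sessions >= 300,
        "false_positives_ok": false_positive_rate < 0.12,
        "sla_ok": first_response_min < 30,
        "uplift_ok": extra_bookings_per_week >= min_extra_bookings,
    }
    return all(checks.values()), checks


ok, checks = ready_to_scale(420, 0.08, 18, 12, min_extra_bookings=10)
print(ok)  # True
```

Returning the individual checks alongside the verdict shows which guardrail failed, so the team knows whether to fix tagging, routing, or staffing first.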

Practical judgment: Conversational AI raises conversion rates only when measurement and operations are baked in. If the conversation is not mapped to revenue in your CDP, or if escalations are slow and contextless, the short-term engagement gains will not convert into durable revenue. Fix the identity and event plumbing first, then tune language and routing.

- Immediate actions: Instrument a single high-friction page with a 3-step flow, map conversation events into the Gleantap platform, and set an SLA for escalations before you launch the test

- Operational next step: Run a 60-day A/B test focused on chat-to-booking or chat-to-purchase and monitor guardrail metrics in real time

- If results lag: tighten intent definitions, reduce breadth of coverage, and verify event tagging with manual session sampling

Written by

Marcus Webb

Marcus is a B2C marketing strategist with over 8 years of experience in lifecycle marketing, SMS campaigns, and customer retention. He specialises in helping multi-location businesses reduce churn and build long-term customer loyalty.

Ready to Run Successful Marketing Campaigns and Grow Your Business?

Gleantap helps you unify customer data, track behavior patterns, and automate personalized campaigns, so you can increase repeat purchases and grow your business.
